Contradictions Are Not Errors

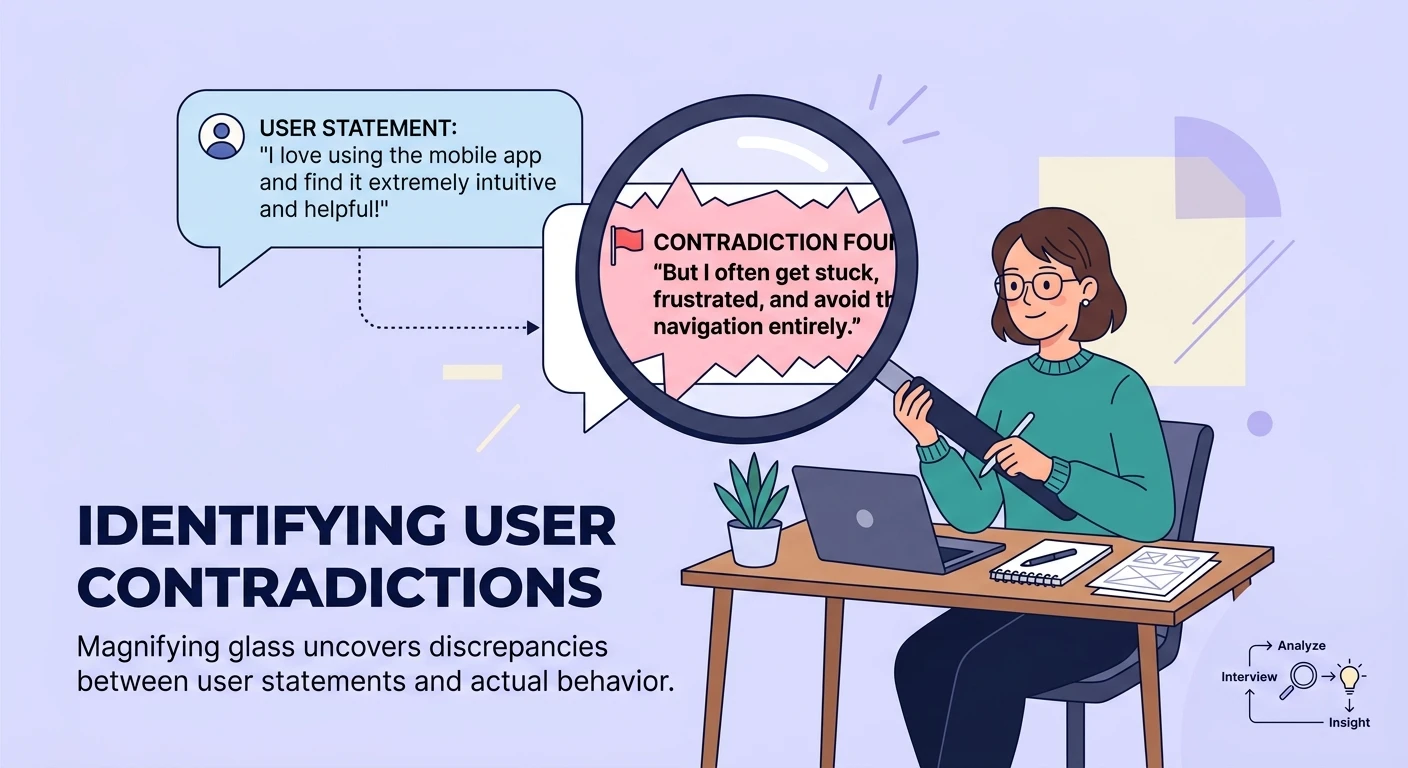

A product manager tells you the onboarding flow is intuitive. Twenty minutes later, they describe spending forty-five minutes figuring out a core feature on their first day. A healthcare administrator says they trust the AI recommendations completely, then reveals they manually verify every single output before acting on it.

Most researchers note these contradictions and move on. Some flag them as data quality issues. A few dismiss the participant as unreliable.

All of these responses are wrong.

Contradictions in qualitative interviews are not noise -- they are the most concentrated source of insight in your data. They reveal the gap between how people think they behave and how they actually behave, between stated preferences and revealed preferences, between the narrative someone has constructed about their experience and the messy reality underneath.

The challenge is that contradictions are hard to detect at scale, easy to miss in real time, and almost impossible to analyze systematically without a deliberate methodology.

Why People Contradict Themselves

Understanding why contradictions occur is essential to interpreting them correctly.

Identity-behavior gaps. People have strong self-concepts that do not always match their behavior. A user who identifies as tech-savvy will describe themselves as comfortable with complex tools, even when their actual usage patterns reveal significant struggle. The contradiction between "I am good at this" and "here is what actually happened" is one of the richest veins in qualitative research.

Temporal framing shifts. How participants describe an experience changes depending on whether they are recalling the overview or narrating specific moments. The overview tends to be positive and smoothed -- people remember outcomes and general impressions. The moment-by-moment narration captures friction, confusion, and frustration that the summary glosses over. Both are true, but they contradict each other.

Social context sensitivity. Participants adjust their responses based on who they think is listening and what they think the research is for. Early in an interview, before rapport is established, responses skew toward socially desirable answers. Later, as comfort increases, more honest assessments emerge -- often contradicting what was said fifteen minutes earlier.

Genuine ambivalence. Sometimes people simply hold contradictory views. They love a product AND find it frustrating. They want more features AND want simplicity. These contradictions are not confusion -- they reflect the real complexity of human experience that binary survey questions cannot capture, which is why the empathy gap in product analytics leads teams astray.

A Framework for Contradiction Detection

Systematic contradiction detection requires both real-time awareness during interviews and post-hoc analysis across transcripts.

During the Interview

Listen for qualifier shifts. When a participant shifts from unqualified statements ("It works great") to qualified ones ("It works great for the basic stuff, but..."), a contradiction is forming. The qualifier reveals the boundary condition that the initial statement did not acknowledge.

Track behavioral vs. attitudinal statements. Maintain a mental ledger of what the participant says they do versus what they describe doing. When "I always check the dashboard first thing" is later contradicted by "Well, on most days I skip the dashboard and go straight to the task list," that is a signal worth probing.

Probe without confrontation. When you detect a contradiction, do not point it out directly. Instead, revisit the earlier statement naturally: "Earlier you mentioned the onboarding was intuitive -- can you walk me through your first day?" Let the participant discover and resolve the contradiction themselves. Their resolution is often more insightful than the contradiction itself.

Post-Interview Analysis

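This is where AI tools can do the most for qualitative research, because comparing statements within and across transcripts is exactly the kind of work that is hard to do by hand at scale.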

Semantic contradiction mapping. AI tools can compare every statement in a transcript against every other statement and flag pairs where the semantic content conflicts. This is not about keyword matching -- it requires understanding that "I trust the system" and "I always double-check its output" are contradictory in context.
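The pairwise comparison described above can be sketched in a few lines of Python. This is a minimal illustration, not any particular tool's implementation. The `conflict_score` parameter is a hypothetical stand-in for whatever model judges whether two statements conflict in context, and the toy scorer below only looks up one hand-labeled pair so the example runs on its own. The middle transcript line is made up for illustration.

```python
from itertools import combinations
from typing import Callable, List, Tuple


def find_contradictions(
    statements: List[str],
    conflict_score: Callable[[str, str], float],
    threshold: float = 0.5,
) -> List[Tuple[int, int, float]]:
    """Score every pair of statements and return the pairs at or above the threshold.

    Each result is (index_a, index_b, score), strongest conflicts first.
    """
    flagged = []
    for (i, a), (j, b) in combinations(enumerate(statements), 2):
        score = conflict_score(a, b)
        if score >= threshold:
            flagged.append((i, j, score))
    return sorted(flagged, key=lambda pair: pair[2], reverse=True)


# Hypothetical scorer: a lookup of hand-labeled pairs standing in for a real model.
KNOWN_CONFLICTS = {
    frozenset({"I trust the system", "I always double-check its output"}): 0.9,
}


def toy_conflict_score(a: str, b: str) -> float:
    return KNOWN_CONFLICTS.get(frozenset({a, b}), 0.0)


transcript = [
    "I trust the system",
    "We rolled it out in March",  # illustrative filler statement
    "I always double-check its output",
]
pairs = find_contradictions(transcript, toy_conflict_score)
```

Note that comparing every statement against every other grows quadratically: a transcript with 200 statements yields 19,900 pairs, which is part of why this step is impractical to do by hand.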

Cross-participant contradiction analysis. When participant A says "Everyone on our team uses the reporting module" and participant B from the same team says "Nobody really looks at the reports," you have a contradiction that reveals something neither participant could tell you individually. Turning stakeholder interviews into strategic intelligence depends on finding exactly these cross-participant discrepancies.
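One way to surface these discrepancies, assuming statements have already been coded into a topic and a stance, is to group claims by team and topic and flag any group where participants disagree. The sketch below is illustrative only: the `Claim` structure, the stance labels, and the team names are assumptions, not part of any specific analysis tool.

```python
from collections import defaultdict
from typing import Dict, List, NamedTuple, Set, Tuple


class Claim(NamedTuple):
    participant: str
    team: str
    topic: str
    stance: str  # a coded summary of the statement, e.g. "widely used"


def cross_participant_conflicts(
    claims: List[Claim],
) -> Dict[Tuple[str, str], Dict[str, Set[str]]]:
    """Return {(team, topic): {stance: participants}} where more than one stance appears."""
    grouped: Dict[Tuple[str, str], Dict[str, Set[str]]] = defaultdict(
        lambda: defaultdict(set)
    )
    for claim in claims:
        grouped[(claim.team, claim.topic)][claim.stance].add(claim.participant)

    conflicts = {}
    for key, stances in grouped.items():
        participants = set().union(*stances.values())
        # Require at least two stances held by at least two different people.
        if len(stances) > 1 and len(participants) > 1:
            conflicts[key] = {stance: set(who) for stance, who in stances.items()}
    return conflicts


claims = [
    Claim("A", "ops", "reporting module", "widely used"),
    Claim("B", "ops", "reporting module", "rarely used"),
    Claim("C", "sales", "reporting module", "widely used"),
]
conflicts = cross_participant_conflicts(claims)
```

Here only the "ops" team is flagged, because A and B describe the same module in incompatible ways, while C's claim concerns a different team and has nothing to conflict with.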

Longitudinal contradiction tracking. In studies with multiple touchpoints, tracking how participants' statements evolve -- and where they contradict their earlier selves -- reveals attitude shifts, adoption curves, and changing mental models that single-session research cannot capture. This is where longitudinal qualitative research with AI truly shines.
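As a rough sketch of what tracking looks like in practice, suppose each session's transcript has been coded into a single stance per participant and topic. The function below (a hypothetical helper with made-up stance labels) orders the sessions and reports each point where the stance differs from the previous one.

```python
from typing import List, Tuple


def stance_shifts(history: List[Tuple[int, str]]) -> List[Tuple[int, str, str]]:
    """Given (session_number, stance) records, return (session, previous, new) for each change."""
    ordered = sorted(history)  # sort by session number
    shifts = []
    for (_, previous), (session, current) in zip(ordered, ordered[1:]):
        if current != previous:
            shifts.append((session, previous, current))
    return shifts


# Records may arrive out of order; the stance labels are illustrative.
history = [
    (3, "skeptical"),
    (1, "enthusiastic"),
    (2, "enthusiastic"),
    (4, "cautiously positive"),
]
shifts = stance_shifts(history)
```

The output marks sessions 3 and 4 as the points to revisit in the transcripts, since those are where the participant's account departs from what they said before.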

Contradiction Taxonomies

Not all contradictions carry equal weight. Categorizing them helps prioritize analysis.

Type 1: Aspiration-Reality contradictions. "I want X" contradicted by behavioral evidence that the participant consistently chooses not-X. These reveal product positioning opportunities -- the gap between aspiration and behavior is where features can create value.

Type 2: Stated-Revealed preference contradictions. What people say they prefer versus what their actions demonstrate they prefer. These are critical for validating product assumptions without running a full study because they expose where survey data and behavioral data will diverge.

Type 3: Internal narrative contradictions. Contradictions within a participant's own story that reveal unresolved tensions or experiences they have not fully processed. These are the highest-value findings because they point to problems participants cannot articulate directly.

Type 4: Social performance contradictions. Early sanitized responses contradicted by later authentic ones. These tell you less about the product and more about the research context -- but they are important for understanding how AI-powered governance frameworks intersect with human reporting behaviors in enterprise settings.

Building Contradiction Detection Into Your Workflow

The teams that extract the most value from qualitative research have contradiction detection built into their standard operating procedures.

Tag contradictions in your analysis tool. Add a "contradiction" tag or code to your qualitative analysis taxonomy. When you can search your repository for all flagged contradictions across studies, patterns emerge that are invisible at the individual transcript level.
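To make the idea concrete, here is a minimal sketch of a tagged repository and a search over it. It assumes excerpts are stored with a set of tags and an optional contradiction type drawn from the four-part taxonomy above. The `Excerpt` structure, the study names, and the sample excerpts are hypothetical; a real analysis tool will have its own data model.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import List, Optional, Set


class ContradictionType(Enum):
    ASPIRATION_REALITY = 1
    STATED_REVEALED = 2
    INTERNAL_NARRATIVE = 3
    SOCIAL_PERFORMANCE = 4


@dataclass
class Excerpt:
    study: str
    participant: str
    text: str
    tags: Set[str] = field(default_factory=set)
    contradiction_type: Optional[ContradictionType] = None


def find_flagged(
    repository: List[Excerpt], ctype: Optional[ContradictionType] = None
) -> List[Excerpt]:
    """Return excerpts tagged "contradiction", optionally limited to one type."""
    return [
        excerpt
        for excerpt in repository
        if "contradiction" in excerpt.tags
        and (ctype is None or excerpt.contradiction_type is ctype)
    ]


repository = [
    Excerpt("onboarding-study", "P1", "The onboarding was intuitive.",
            {"onboarding", "contradiction"}, ContradictionType.INTERNAL_NARRATIVE),
    Excerpt("onboarding-study", "P1", "It took forty-five minutes to figure out a core feature.",
            {"onboarding", "contradiction"}, ContradictionType.INTERNAL_NARRATIVE),
    Excerpt("trust-study", "P7", "I trust the recommendations completely.",
            {"trust", "contradiction"}, ContradictionType.STATED_REVEALED),
    Excerpt("trust-study", "P9", "Setup took about a week.", {"setup"}),
]

all_flagged = find_flagged(repository)
studies_with_contradictions = {excerpt.study for excerpt in all_flagged}
narrative_only = find_flagged(repository, ContradictionType.INTERNAL_NARRATIVE)
```

Storing the type alongside the tag keeps the search simple while still letting you filter by the category of contradiction when prioritizing analysis.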

Report contradictions explicitly. In research readouts, do not smooth over contradictions to create a clean narrative. Present the contradiction itself as a finding: "Participants described the workflow as simple in summary but detailed significant friction in step-by-step narration. This suggests perceived simplicity masks real usability issues."

Quantify contradiction frequency. Track the percentage of interviews that contain significant contradictions on key topics. If 70% of participants contradict themselves about a specific feature's usefulness, that can be a stronger signal than a satisfaction score -- it suggests the feature is generating cognitive load that participants are struggling to reconcile.
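The calculation itself is simple once contradictions are flagged per interview. The sketch below assumes a mapping from interview ID to the set of topics on which a significant contradiction was flagged. The interview IDs and the "export" topic are made up for illustration.

```python
from typing import Dict, Set


def contradiction_rate(flags_by_interview: Dict[str, Set[str]], topic: str) -> float:
    """Percentage of interviews with a flagged contradiction on the given topic."""
    if not flags_by_interview:
        return 0.0
    hits = sum(1 for topics in flags_by_interview.values() if topic in topics)
    return 100.0 * hits / len(flags_by_interview)


# Ten illustrative interviews; the first seven have a flagged contradiction on "export".
flags_by_interview = {
    f"P{i}": ({"export"} if i < 7 else set()) for i in range(10)
}
rate = contradiction_rate(flags_by_interview, "export")  # 70.0
```

With samples this small, each interview moves the figure by ten percentage points, so the rate is best reported alongside the raw counts (7 of 10) rather than on its own.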

The Bottom Line

Consistency in qualitative data should make you suspicious, not confident. When every participant tells a smooth, contradiction-free story, you are probably hearing rehearsed narratives, socially desirable responses, or surface-level reflections that have not been probed deeply enough.

The most valuable insights live in the gaps between what people say and what they do, between their first answer and their third, between the story they tell about themselves and the story their behavior tells about them.

Build your research practice around finding those gaps. They are where the real product decisions hide.