The False Binary That Is Holding Research Teams Back

The discourse around AI in qualitative research has devolved into two camps: AI enthusiasts who claim automated interviews will replace human moderators entirely, and traditionalists who insist that no algorithm can replicate the nuance of human conversation.

Both are wrong. And the teams producing the best consumer insights in 2026 have moved past this debate entirely.

The question is not "AI or human?" -- it is "which method, for which question, at which stage of the research process?" That reframing transforms AI from a threat into a multiplier. The organizations getting this right are not choosing sides. They are building research programs that deploy each modality where it has a structural advantage.

Where AI-Moderated Interviews Excel

AI moderation is not a cheaper substitute for human moderation. It is a fundamentally different research instrument with its own strengths. Understanding these strengths -- rather than treating AI as a "budget human" -- is what separates effective hybrid programs from those that produce mediocre results with both methods.

Scale Without Sacrificing Depth

A human moderator can conduct 4-6 depth interviews per day before fatigue degrades quality. An AI moderator can run hundreds simultaneously, each with full adaptive probing, consistent follow-up quality, and no interviewer fatigue effects. This is not marginal efficiency -- it is a category shift.

When you need to understand a phenomenon across 50+ participants to identify segment-level patterns, AI moderation makes that feasible within the timeline and budget that previously limited you to 12-15 conversations. As we explored in our analysis of AI-moderated interview best practices, the technology has matured to the point where adaptive probing produces genuinely useful follow-up questions.
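As a rough back-of-the-envelope check on that capacity gap, the sketch below converts the 4-6 interviews per day figure into moderator-days for a given sample size. It assumes a single human moderator and deliberately ignores recruiting, scheduling, and no-shows; the function name and defaults are illustrative, not taken from any tool.

```python
import math

def moderator_days(n_interviews: int, per_day_low: int = 4, per_day_high: int = 6) -> tuple[int, int]:
    """Return (best_case, worst_case) working days for one human moderator.

    Assumes only the 4-6 interviews/day range quoted above:
    no recruiting time, no rescheduling, no no-shows.
    """
    best = math.ceil(n_interviews / per_day_high)   # every day at the high end
    worst = math.ceil(n_interviews / per_day_low)   # every day at the low end
    return best, worst

for n in (15, 50, 80):
    best, worst = moderator_days(n)
    print(f"{n} interviews: {best}-{worst} moderator-days")
```

Under these assumptions, 15 interviews fit in 3-4 moderator-days, while 50 take 9-13 and 80 take 14-20, before any scheduling overhead is added.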

Consistency Across Conversations

Human moderators drift. By interview eight, they unconsciously lead toward emerging hypotheses. They probe topics that interest them personally. They spend more time with articulate participants. These are not flaws -- they are features of human cognition. But they introduce systematic variation that complicates cross-participant analysis.

AI moderators apply the same discussion guide logic to every conversation. Every participant is probed to the same standard on every topic, even when the specific follow-up questions adapt to their answers. This consistency makes comparative analysis dramatically more reliable, particularly when you are looking for subtle differences across segments or markets. The research on moderator bias in AI-assisted interviews documents how this consistency improves data quality for specific research designs.

Asynchronous Access to Hard-to-Reach Participants

Executives, healthcare professionals, shift workers, parents of young children, participants in different time zones -- these populations share one characteristic: synchronous 60-minute interviews are logistically brutal to schedule. No-show rates for these segments regularly exceed 35%.

AI-moderated interviews unfold asynchronously. Participants respond when they have time -- at 11pm after the kids are asleep, during a break between surgeries, across three sessions over a week. The rise of asynchronous research is not about convenience. It is about accessing populations that synchronous methods systematically exclude.

Sensitive Topics Where Anonymity Reduces Bias

Financial struggles, health conditions, addiction, workplace dissatisfaction, body image -- topics where social desirability bias distorts human-moderated conversations. Participants consistently disclose more to AI moderators on sensitive topics. The absence of a human audience removes the performance element that causes people to sanitize their experiences.

This is not speculation. Organizations running parallel studies -- same questions, same populations, AI vs human moderator -- consistently find that AI-moderated conversations surface more candid responses on stigmatized topics. The research on anonymous AI interviews for community feedback demonstrates this effect in practice.

Where Human Moderators Remain Irreplaceable

Acknowledging AI strengths does not diminish the irreplaceable value of skilled human moderators. Certain research objectives require capabilities that AI cannot replicate -- and pretending otherwise produces shallow insights dressed in efficiency metrics.

Complex Emotional Rapport

When research involves grief, trauma, major life transitions, or deeply personal experiences, the moderator's ability to hold space matters. A skilled human moderator adjusts their pace, tone, and body language in response to micro-cues -- a slight hesitation, a change in breathing, eyes welling up. They know when to push gently and when to sit in silence.

AI can detect sentiment shifts in text. It cannot feel the weight of a room. For research where emotional depth is the objective -- not just a side effect -- human moderators produce fundamentally richer data.

Co-Creation and Generative Exercises

Design workshops, collaborative ideation, concept co-development -- these require the improvisational responsiveness of a human facilitator. When a participant says something unexpected and the entire direction of the conversation needs to pivot to explore that thread, human moderators do this instinctively. AI moderators, even adaptive ones, operate within the bounds of their configured logic.

Exploratory Research in Undefined Territory

When you genuinely do not know what you are looking for -- when the research objective is "understand what is happening in this space" rather than "validate hypothesis X" -- human moderators' ability to follow intuition and recognize emergent patterns in real time is unmatched. The serendipitous discovery that reframes an entire project does not come from systematic probing. It comes from a human noticing something strange and chasing it.

High-Stakes Stakeholder Dynamics

Executive interviews, board-level research, sensitive organizational studies -- contexts where the moderator's credibility, status, and interpersonal skill directly affect data access. A VP of Product will not give candid responses about organizational dysfunction to a chatbot. They will talk to a skilled researcher who demonstrates domain expertise and builds trust through human connection.

The Hybrid Model: Breadth Then Depth

The most effective research programs are not choosing between AI and human moderation. They are sequencing them strategically.

Phase 1: AI for Breadth and Pattern Identification

Run 30-80 AI-moderated interviews across your target population. Use consistent discussion guides that cover the full landscape of your research questions. Analyze at scale to identify segments, patterns, outliers, and emerging themes that warrant deeper exploration.

This phase compresses into 5-7 days what would previously have required 6-8 weeks of human moderation. It gives you a map of the territory before you decide where to dig.

Phase 2: Human Moderators for Targeted Depth

Select 8-12 participants from Phase 1 who represent the most interesting patterns, surprising outliers, or segments where the AI-moderated data raised more questions than it answered. Conduct human-moderated deep dives with these participants, informed by what you already know from their AI interview.

The human moderator enters these conversations with context. They know which threads to pull, which responses seemed surface-level, where the real complexity lies. This makes the human-moderated sessions dramatically more productive than cold-start interviews.
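One way to make the Phase 2 selection repeatable is to score each Phase 1 participant and pick from the top while keeping every segment represented. The sketch below is a minimal illustration, not a prescribed method: the `followup_score` field (an analyst rating of how much a conversation left unexplored), the segment labels, and the two-pass rule are all assumptions introduced here.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Phase1Participant:
    participant_id: str
    segment: str           # segment label assigned during Phase 1 analysis
    followup_score: float  # hypothetical 0-1 analyst rating: how much is left to explore

def select_for_deep_dive(participants, target=10):
    """Pick up to `target` participants: best of each segment first, then top scores overall."""
    ranked = sorted(participants, key=lambda p: p.followup_score, reverse=True)
    chosen, seen_segments = [], set()
    # Pass 1: take the top-scoring participant from each segment while slots remain.
    for p in ranked:
        if p.segment not in seen_segments and len(chosen) < target:
            chosen.append(p)
            seen_segments.add(p.segment)
    # Pass 2: fill any remaining slots with the highest remaining scores.
    for p in ranked:
        if len(chosen) >= target:
            break
        if p not in chosen:
            chosen.append(p)
    return chosen

# Illustrative data only.
pool = [
    Phase1Participant("p01", "loyal", 0.9),
    Phase1Participant("p02", "loyal", 0.8),
    Phase1Participant("p03", "lapsed", 0.4),
    Phase1Participant("p04", "new", 0.7),
    Phase1Participant("p05", "new", 0.2),
]
picked = select_for_deep_dive(pool, target=4)
print([p.participant_id for p in picked])
```

Covering segments before chasing the highest scores keeps the deep dives from clustering in whichever segment happened to produce the most surprising AI interviews.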

Phase 3: Integrated Analysis

Synthesize findings across both phases. AI interview data provides the breadth to show that a pattern recurs across segments rather than in a handful of conversations. Human interview data provides the depth, nuance, and narrative that make those patterns actionable. Together, they produce insights that neither method alone could generate.

This hybrid approach typically costs 40-60% less than an all-human study of equivalent insight quality, while producing richer segment-level data and faster timelines.

Industry Applications: Who Is Getting This Right

CPG: Concept Testing at Scale

Consumer packaged goods companies are using AI-moderated interviews to test 8-12 product concepts across 200+ consumers in a single week, then conducting human-moderated shop-alongs and in-home interviews with the 15 participants whose responses suggest the most complex purchase decision processes. The breadth data quantifies opportunity size. The depth data reveals the emotional and contextual drivers that concept scores alone cannot capture.

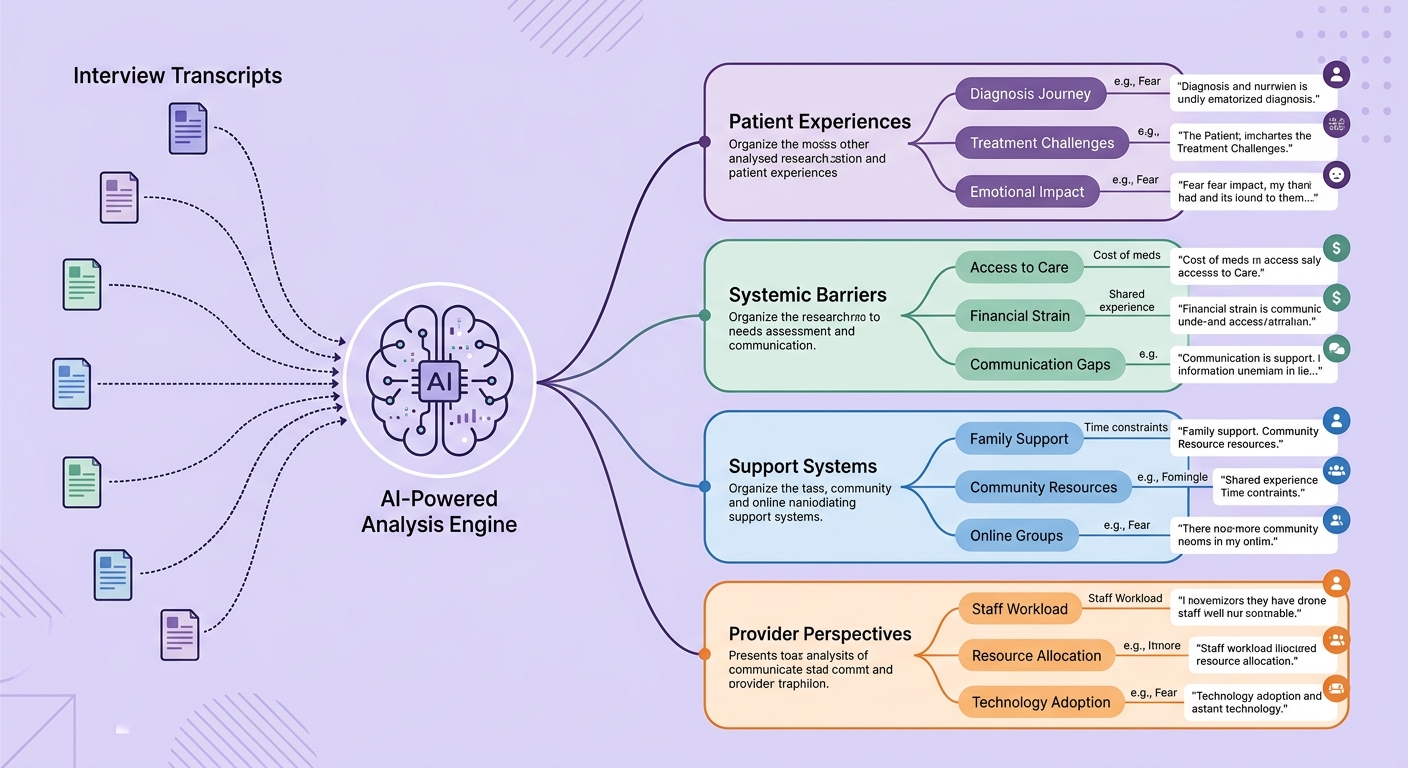

Healthcare: Patient Experience Research

Healthcare organizations face a specific challenge: patients who are too ill, too exhausted, or too anxious for synchronous interviews. AI-moderated async conversations let patients share their experiences in their own time, in their own words, without the pressure of a scheduled session. Human moderators then conduct follow-up sessions with patients whose AI interviews surfaced clinically significant experiences or unmet needs. The principles outlined in patient-reported outcomes via AI interviews show how this combination respects patient capacity while producing actionable data.

Technology: Continuous Discovery

Product teams at technology companies are embedding AI-moderated interviews into their continuous discovery cadence -- running 10-20 AI conversations per sprint cycle to maintain customer proximity. When AI interviews surface a surprising pattern or a potential opportunity, they escalate to human-moderated sessions for validation and depth. This creates a continuous discovery rhythm that does not depend on the research team's synchronous capacity.

A Practical Framework: Matching Method to Question

Use this decision framework to determine which modality fits your research question:

Use AI moderation when:

- Sample size exceeds 20 participants

- Questions are well-defined and you know what you are looking for

- Participants are geographically distributed or schedule-constrained

- Topic benefits from anonymity or reduced social pressure

- Consistency across conversations is critical for comparative analysis

- Timeline requires parallel rather than sequential data collection

Use human moderation when:

- Research is exploratory with undefined boundaries

- Emotional depth and rapport are central to data quality

- Participants require high-touch relationship building (executives, vulnerable populations)

- The research involves co-creation, design, or generative exercises

- You need real-time adaptation beyond discussion guide logic

- The study has fewer than 10 participants and each conversation must be maximally rich

Use the hybrid model when:

- You need both breadth (segment patterns) and depth (individual narratives)

- Budget cannot cover an all-human study at an adequate sample size

- You want to identify the right participants for deep-dives before committing moderator time

- Research questions span both structured validation and open exploration

- You need fast initial findings with the option to go deeper on specific threads
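
The checklists above can be approximated as a simple signal tally. The following Python sketch is one possible encoding, offered as an illustration rather than a validated scoring model: the field names, the subset of criteria included, and the rule that two or more signals on each side (or a tie) point to the hybrid model are all assumptions made for this example.

```python
from dataclasses import dataclass

@dataclass
class StudyProfile:
    sample_size: int
    well_defined_questions: bool
    schedule_constrained: bool   # distributed or hard-to-schedule participants
    sensitive_topic: bool        # benefits from anonymity or reduced social pressure
    needs_consistency: bool      # comparative analysis across conversations
    exploratory: bool            # undefined boundaries
    rapport_critical: bool       # emotional depth, executives, vulnerable populations
    generative_exercises: bool   # co-creation, design workshops

def recommend_modality(study: StudyProfile) -> str:
    """Tally checklist signals and return 'ai', 'human', 'hybrid', or 'undetermined'."""
    ai_signals = sum([
        study.sample_size > 20,
        study.well_defined_questions,
        study.schedule_constrained,
        study.sensitive_topic,
        study.needs_consistency,
    ])
    human_signals = sum([
        study.sample_size < 10,
        study.exploratory,
        study.rapport_critical,
        study.generative_exercises,
    ])
    if ai_signals == 0 and human_signals == 0:
        return "undetermined"
    # Assumed rule: strong signals on both sides, or a tie, suggest sequencing both methods.
    if min(ai_signals, human_signals) >= 2 or ai_signals == human_signals:
        return "hybrid"
    return "ai" if ai_signals > human_signals else "human"

# Illustrative study: a large, structured, multi-market comparison.
concept_test = StudyProfile(
    sample_size=60, well_defined_questions=True, schedule_constrained=True,
    sensitive_topic=False, needs_consistency=True, exploratory=False,
    rapport_critical=False, generative_exercises=False,
)
print(recommend_modality(concept_test))
```

A tally like this is best treated as a conversation starter for the research team; a single overriding factor, such as a highly vulnerable population, can reasonably outweigh the count.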

Building Your Hybrid Research Capability

Implementing hybrid research requires more than access to both tools. It requires an analytical infrastructure that can synthesize across modalities -- connecting what 80 AI-moderated conversations revealed at scale with what 10 human-moderated sessions revealed in depth. A solid research repository becomes essential for tracking insights across both data types.

Qualz.ai's platform was designed for exactly this workflow: AI-moderated interviews that produce structured, analyzable data at scale, combined with analysis tools that help human researchers synthesize findings across both AI and human-moderated conversations. The goal is not to replace the human researcher's judgment -- it is to give them better raw material to work with, at volumes that were previously impossible.

The teams winning at consumer research in 2026 are not the ones who adopted AI fastest or the ones who resisted it longest. They are the ones who figured out the combination -- using each modality where it has a structural advantage, sequencing them for compounding insight, and building the infrastructure to synthesize across both.

The AI vs human debate was always the wrong question. The right question is: how do you build a research program that is greater than the sum of its parts?