The Agreement Illusion

Qualitative research has an uncomfortable relationship with reliability. We calculate Cohen's kappa, report inter-rater agreement percentages, and treat high concordance as evidence of coding quality. But what does 85% agreement actually mean? It means that trained researchers, working from the same codebook, looking at the same data, disagree on roughly one in seven coding decisions. And the 85% they agree on may represent the easy, obvious codes — the ones that barely need human judgment at all.
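
To make the gap between raw agreement and chance-corrected agreement concrete, here is a minimal sketch in plain Python. The two code labels and the 20 passages are invented for illustration. The helper names `percent_agreement` and `cohens_kappa` are assumptions of this sketch, not part of any particular library.

```python
from collections import Counter

def percent_agreement(a, b):
    """Share of passages on which both coders assigned the same code."""
    return sum(x == y for x, y in zip(a, b)) / len(a)

def cohens_kappa(a, b):
    """Cohen's kappa: observed agreement corrected for chance agreement."""
    n = len(a)
    p_o = percent_agreement(a, b)
    freq_a, freq_b = Counter(a), Counter(b)
    # Chance agreement: probability that two independent coders with these
    # marginal code frequencies would land on the same code.
    p_e = sum(freq_a[c] * freq_b[c] for c in set(a) | set(b)) / (n * n)
    return (p_o - p_e) / (1 - p_e)

# Hypothetical data: 20 passages, most of them "easy" passages coded "none".
coder_a = ["none"] * 16 + ["frustration"] + ["none"] + ["frustration"] * 2
coder_b = ["none"] * 16 + ["frustration"] + ["frustration"] + ["none"] * 2

print(percent_agreement(coder_a, coder_b))       # 0.85
print(round(cohens_kappa(coder_a, coder_b), 2))  # 0.32
```

In this toy dataset the coders agree on 85% of passages, yet kappa is only about 0.32, because nearly all of the agreement sits on the easy "none" passages and the coders match on just one of the four passages where either of them saw frustration.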

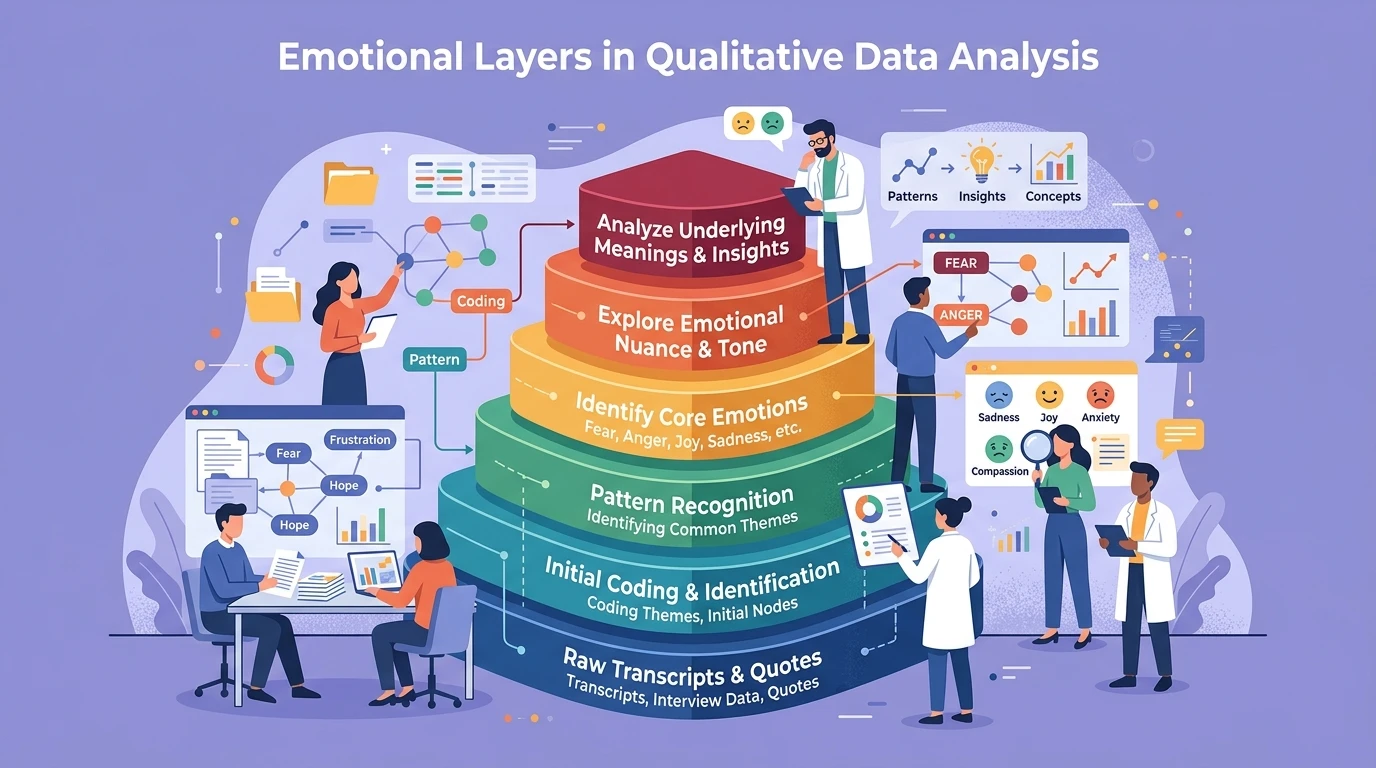

Interpretation drift, the tendency of coders working from the same codebook to diverge in how they read the same data, is not a bug in qualitative analysis. It is a feature of working with data that is genuinely ambiguous, contextually dependent, and semantically rich. The participant who says "I guess it is fine" could be expressing genuine satisfaction, resigned acceptance, social desirability pressure, or cognitive fatigue. Two competent coders applying the same framework will sometimes reach different conclusions because the data legitimately supports multiple interpretations.

Why Drift Happens

Contextual anchoring divergence. Each coder builds a running mental model of the participant as they move through a transcript. If coder A read an earlier passage as frustrated, they interpret subsequent ambiguous statements through a frustration lens. Coder B, who read the same passage as contemplative, carries a different interpretive frame forward. By paragraph ten, they are coding the same words from fundamentally different contextual positions.

This mirrors the broader challenge of how anchoring effects contaminate research findings — except here, the researchers themselves are being anchored by their own prior interpretations within the same dataset.

Codebook elasticity. Written code definitions seem precise until you apply them to real data. "Expresses frustration with workflow" — does a heavy sigh count? What about sarcasm? What about a participant describing frustration they felt last month but no longer feel? Every codebook has boundary cases that the definitions do not resolve, and each coder resolves these boundaries slightly differently based on their training, theoretical orientation, and personal experience.

Fatigue-driven threshold shift. Coding is cognitively demanding work. After a few hours, coders tend to become either more liberal (coding more passages to finish faster) or more conservative (requiring stronger evidence as their threshold for "clear enough" rises). Two coders experiencing fatigue differently will drift apart not because they disagree on interpretation but because their decision thresholds have shifted at different rates. This connects directly to what we know about research fatigue degrading analytical sharpness.

Theoretical gravity. Coders do not approach data as blank slates. A coder trained in phenomenology attends to experiential descriptions. A coder trained in grounded theory looks for process and action. A coder with a UX background foregrounds usability signals. These theoretical orientations act as gravitational fields that pull ambiguous data toward different interpretive centers.

The Problem With Forcing Agreement

Standard practice says: when coders disagree, discuss until consensus. This sounds rigorous but often produces artificial agreement. The more senior researcher's interpretation wins. The more articulate argument wins. The interpretation that fits the emerging narrative wins. Consensus meetings do not resolve genuine ambiguity — they suppress it by choosing one reading and discarding others.

Worse, forced agreement teaches coders to converge preemptively. After several consensus discussions that reliably favor certain interpretive styles, coders adjust their independent coding to match anticipated group preferences. Your inter-rater reliability improves, but not because coding quality improved — because independent judgment decreased.

The field of AI-assisted qualitative analysis is attempting to address this by providing consistent baseline coding that human researchers can then interrogate. But AI coding introduces its own interpretive skew: its readings are consistent, but they may be systematically biased in ways that human coders are not.

Drift as Data

Here is the counterintuitive insight: disagreement between coders is often your most valuable analytical signal. When two trained researchers look at the same passage and see different things, that passage is doing something interesting. It is semantically dense, contextually ambiguous, or emotionally layered in ways that deserve deeper attention.

Instead of resolving disagreements, map them:

- Where do disagreements cluster? If coders consistently disagree on the same types of passages, those passage types represent genuine interpretive complexity in your phenomenon.

- What patterns emerge in who disagrees, and how? If coder A consistently interprets hedging language as uncertainty while coder B reads it as politeness, you have identified a dimension of your data that your codebook does not adequately capture.

- Do disagreements concentrate around specific participants? Some participants communicate in more ambiguous ways. This itself is a finding about how those participants relate to the topic — as explored in work on detecting contradictions in qualitative interviews.
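
The three mapping questions above can be sketched as simple tallies. This is only an illustration under stated assumptions: the record layout (participant, passage type, coder A's code, coder B's code), the passage-type labels, and the helper `disagreement_rates` are all hypothetical, and a real project would export these fields from its own coding tool.

```python
from collections import Counter

# Hypothetical records: (participant, passage_type, coder A's code, coder B's code)
records = [
    ("P1", "workaround", "adaptation", "frustration"),
    ("P1", "direct_complaint", "frustration", "frustration"),
    ("P2", "hedging", "uncertainty", "politeness"),
    ("P2", "workaround", "adaptation", "frustration"),
    ("P3", "hedging", "uncertainty", "politeness"),
    ("P3", "direct_complaint", "frustration", "frustration"),
    ("P2", "hedging", "uncertainty", "uncertainty"),
]

def disagreement_rates(records, key_index):
    """Disagreement rate per group; the group is the field at key_index."""
    totals, splits = Counter(), Counter()
    for rec in records:
        key = rec[key_index]
        totals[key] += 1
        if rec[2] != rec[3]:  # the two coders assigned different codes
            splits[key] += 1
    return {key: splits[key] / totals[key] for key in totals}

# Where do disagreements cluster?
by_type = disagreement_rates(records, 1)
# Do they concentrate around specific participants?
by_participant = disagreement_rates(records, 0)
# Who disagrees how? Count each (coder A code, coder B code) split.
pair_counts = Counter((a, b) for _, _, a, b in records if a != b)

print(by_type["workaround"])                         # 1.0
print(pair_counts[("uncertainty", "politeness")])    # 2
```

In this invented data every workaround passage splits the coders while direct complaints never do, which is the kind of clustering that points at a boundary the codebook leaves unresolved.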

Practical Approaches to Working With Drift

Calibration sessions with explicit boundary cases. Do not just train coders on clear examples. Deliberately select ambiguous passages and have coders explain their reasoning. The goal is not agreement but transparency about interpretive logic. When you understand why coders diverge, you understand your data better.

Drift documentation as methodology. Report interpretation drift as a methodological finding, not a limitation. "Coders diverged most frequently on passages where participants described workarounds, suggesting that the boundary between adaptation and frustration is phenomenologically blurry in this context." This is a richer contribution than "inter-rater reliability was .82."

Multi-interpretation coding. Allow passages to carry multiple codes from different coders without forcing resolution. Analyze patterns in which codes co-occur across coders. A passage coded as both "resignation" and "pragmatism" by different coders reveals something about the lived experience that neither single code captures.
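
One way to analyze cross-coder co-occurrence is to keep each coder's codes per passage and count which differing codes land on the same passage. The sketch below assumes two coders labeled "A" and "B" and a hypothetical `passages` mapping. The code names and the function `cross_coder_pairs` are illustrative, not a standard method.

```python
from collections import Counter
from itertools import product

# Hypothetical data: passage id -> {coder: set of codes that coder applied}
passages = {
    "p01": {"A": {"resignation"}, "B": {"pragmatism"}},
    "p02": {"A": {"resignation"}, "B": {"resignation"}},
    "p03": {"A": {"resignation", "fatigue"}, "B": {"pragmatism"}},
    "p04": {"A": {"satisfaction"}, "B": {"satisfaction"}},
}

def cross_coder_pairs(passages, coder_x="A", coder_y="B"):
    """Count pairs of different codes that two coders gave the same passage."""
    pairs = Counter()
    for codes in passages.values():
        for cx, cy in product(codes[coder_x], codes[coder_y]):
            if cx != cy:
                # Sort so the pair is counted regardless of which coder
                # chose which code; this discards coder identity on purpose.
                pairs[tuple(sorted((cx, cy)))] += 1
    return pairs

pairs = cross_coder_pairs(passages)
print(pairs[("pragmatism", "resignation")])  # 2
```

Sorting each pair treats "resignation by A, pragmatism by B" the same as the reverse. If the direction matters, for example to study one coder's theoretical orientation, keep the pair unsorted instead.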

Temporal drift tracking. Code the same subset at the beginning and end of your analysis period. Measure how each coder's own interpretations shift over time as they internalize the dataset. This intra-coder drift can be as large as, or larger than, inter-coder disagreement, and it reveals how immersion in data changes interpretive frameworks.
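
A minimal sketch of that comparison, assuming each coder codes the same eight passages once at the start and once at the end of the analysis period. The codes ("fr", "ad", "ne") and all values are invented, and simple percent agreement stands in for whichever agreement statistic a team prefers.

```python
def agreement(x, y):
    """Share of passages that received the same code in both lists."""
    return sum(a == b for a, b in zip(x, y)) / len(x)

# Hypothetical codes for the same 8 passages at two points in time.
start = {
    "A": ["fr", "fr", "ad", "ad", "ne", "ne", "fr", "ad"],
    "B": ["fr", "fr", "ad", "ne", "ne", "ne", "fr", "ad"],
}
end = {
    "A": ["fr", "ad", "ad", "ad", "ne", "fr", "fr", "ad"],
    "B": ["fr", "fr", "ad", "ne", "ne", "ne", "ad", "ad"],
}

# Intra-coder stability: each coder compared with their own earlier coding.
intra = {coder: agreement(start[coder], end[coder]) for coder in start}
# Inter-coder agreement at each time point.
inter_start = agreement(start["A"], start["B"])
inter_end = agreement(end["A"], end["B"])

print(intra)        # {'A': 0.75, 'B': 0.875}
print(inter_start)  # 0.875
print(inter_end)    # 0.5
```

In this toy data coder A agrees with their own earlier coding less often (0.75) than the two coders agreed with each other at the start (0.875), and the coders end up further apart than they began.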

As AI governance frameworks demonstrate, the most robust systems build in multiple validation perspectives rather than forcing single-point-of-truth interpretations. The same principle applies to qualitative analysis.

Implications for Research Credibility

Acknowledging interpretation drift does not weaken your research — it strengthens it by demonstrating methodological sophistication. Reviewers and stakeholders who understand qualitative epistemology know that forced agreement is less credible than documented, analyzed disagreement. The team that reports "our coders diverged on these specific dimensions, which led us to refine our understanding of X" demonstrates more analytical depth than the team that simply reports a kappa statistic.

For teams building research repositories that persist beyond individual projects, documenting interpretive drift preserves the intellectual context that future researchers need to understand why codes were applied the way they were — and where alternative readings remain legitimate.

The Bottom Line

Interpretation drift is not a problem to solve. It is a property of working with data that is genuinely complex. Two researchers will never code the same data identically because qualitative data does not have a single correct reading. The goal is not elimination of drift but systematic engagement with it — using disagreement as an analytical lens rather than a quality metric to minimize. Your inter-rater reliability number tells reviewers you followed protocol. Your drift analysis tells them you understood your data.