The One-Size-Fits-All Interview Problem

Most interview guides assume a generic participant: someone who will answer honestly, elaborate when prompted, and stay on topic with minimal redirection. In reality, participants bring distinct behavioral patterns into every research session. Some over-perform, offering elaborate narratives about every feature interaction. Others under-perform, giving monosyllabic answers that force the researcher to carry the conversation. Still others perform strategically, crafting responses designed to influence product direction.

These are not random variations. They are predictable archetypes shaped by personality, organizational role, interview experience, and relationship to the product being discussed. Researchers who recognize these patterns in real time and adapt their technique accordingly extract richer data than those who mechanically follow a guide regardless of who is sitting across from them.

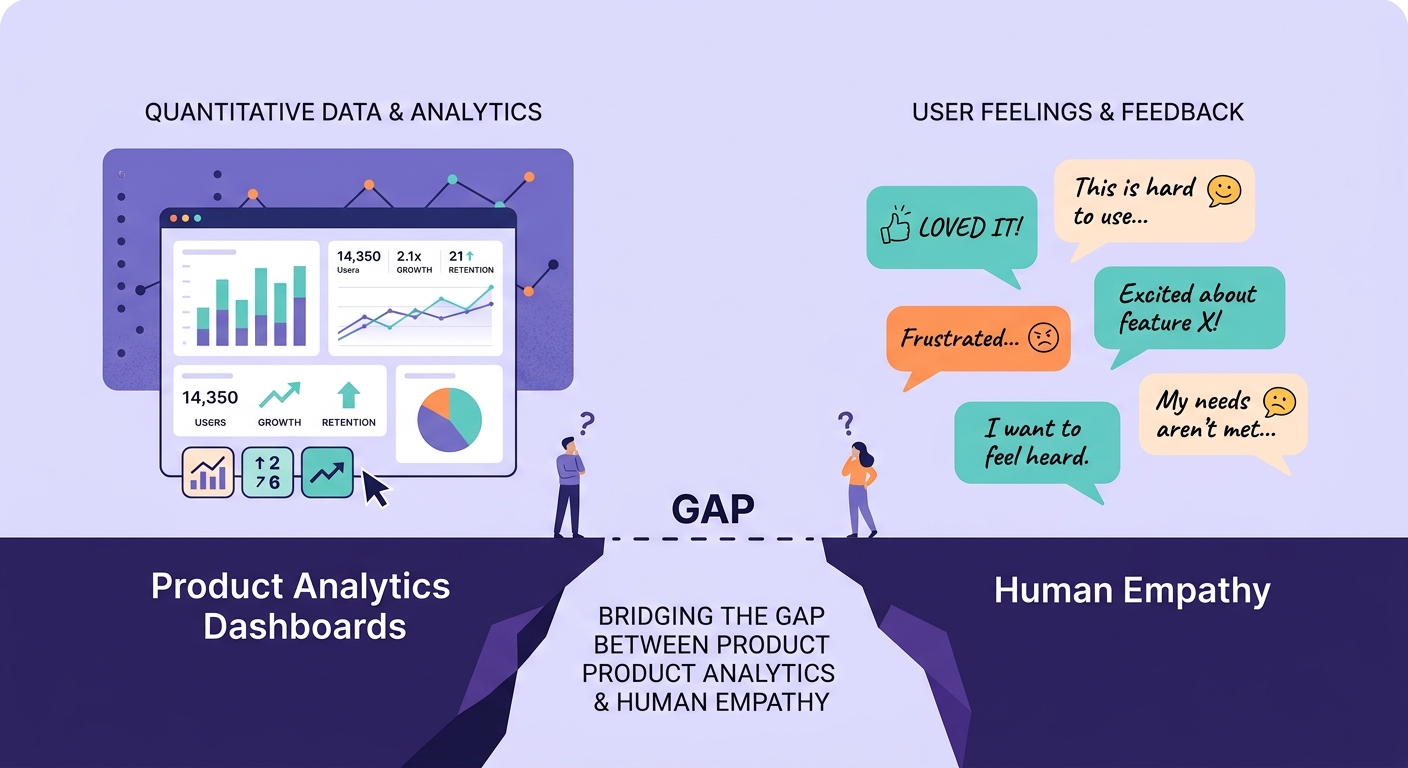

The challenge is that most research training focuses on question design and analysis -- not on real-time participant assessment and adaptive moderation. This gap between methodology and practice is where data quality silently degrades.

The Five Response Archetypes

Across hundreds of qualitative interviews, including analysis of response patterns in AI-driven evaluation contexts, five consistent archetypes emerge:

The Pleaser. This participant wants to be helpful and fears giving "wrong" answers. They agree with implied positions, provide socially desirable responses, and mirror the energy and direction they perceive from the interviewer. Their data is systematically biased toward confirmation of whatever hypothesis seems to underpin the questions.

Adaptation strategy: Remove all evaluative language from your questions. Instead of "How useful did you find this feature?" ask "Walk me through the last time you encountered this feature." The Pleaser cannot fabricate a specific behavioral narrative as easily as they can offer a positive evaluation.

The Expert. This participant positions themselves as unusually knowledgeable, often providing prescriptive solutions rather than describing their actual experience. They speak in generalizations ("what users need is...") rather than specifics ("what happened was..."). Their data conflates personal experience with aspirational product design.

Adaptation strategy: Anchor every question to concrete, recent events. "You mentioned you are an advanced user -- tell me about the last time something surprised you or did not work as expected." The Expert's self-image makes them resistant to admitting confusion, so frame difficulties as product failures rather than user failures.

The Deflector. This participant avoids direct answers, redirects to tangential topics, or gives abstract responses that never quite address the question. They are not being deliberately unhelpful -- they often have organizational or political reasons for avoiding specificity, or they genuinely have not reflected on the topic before.

Adaptation strategy: Use the contextual inquiry approach -- ask them to show rather than tell. "Can you share your screen and walk me through what you did yesterday?" Deflectors struggle less with narrating observable behavior than with articulating opinions or evaluations.

The Narrator. This participant provides rich, detailed stories but struggles with conciseness. Every question generates a five-minute answer that includes extensive context, historical background, and tangential anecdotes. The data is valuable but buried in noise, and the interview risks running long before covering all topics.

Adaptation strategy: Let them narrate fully for the first two questions to build rapport, then introduce gentle time-boxing. "That is really helpful context -- in the interest of time, can you give me the 30-second version of what happened next?" Narrators respond well to explicit structural cues because they want to be good participants.

The Strategist. This participant has an agenda. They want a specific feature built, a specific problem prioritized, or a specific competitor acknowledged. Their responses consistently steer toward their desired outcome regardless of what is being asked. They are often power users or stakeholders with political capital.

Adaptation strategy: Acknowledge their advocacy explicitly, then redirect. "I can see this feature is important to you -- I have noted that. Now I want to understand your broader workflow beyond that specific capability." The Strategist respects direct communication and will often provide excellent data once they feel heard.

Why Archetype Recognition Matters for Data Quality

The risk of ignoring archetypes is not just suboptimal interviews -- it is systematic bias in your dataset. If you have five Pleasers in a ten-person study, your positive signal is inflated. If your enterprise segment is dominated by Experts, you are getting prescriptive input disguised as experiential data.

This connects directly to the research panel fatigue problem. Repeat participants often calcify into specific archetypes over time. A participant who started as a genuine Narrator may become a Strategist after multiple sessions because they have learned that researchers influence product direction.

Teams pursuing research democratization face this challenge acutely. Non-researcher interviewers typically cannot identify archetypes in real time, so their data is more susceptible to archetype-driven distortion. AI-assisted moderation can help by detecting response patterns and suggesting adaptive follow-up questions.

Building Archetype Awareness Into Your Process

Pre-interview signals. Screening responses often telegraph archetypes. Participants who write lengthy, detailed screener answers are likely Narrators. Those who give minimal screener responses may be Deflectors. Participants who volunteer unsolicited product feedback in screening are often Strategists. Using these signals does not mean excluding anyone -- it means preparing adaptive strategies before the session starts.

First-question calibration. Your opening question serves double duty: it builds rapport AND reveals the participant's default communication style. Ask one broad, open question and observe: Do they give a 30-second answer or a 5-minute one? Do they stay experiential or go abstract? Do they seek validation ("if that makes sense?") or assert authority?

Cross-archetype analysis. When synthesizing data, segment by archetype as a validity check. If an insight only appears in Pleaser interviews, it may be confirmation bias rather than signal. If it appears across archetypes -- especially if Deflectors and Strategists both surface it unprompted -- confidence is much higher.
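The cross-archetype check above can be sketched in a few lines of Python. This sketch assumes that your coded interview data records three things for each observation: the insight label, the archetype assigned to the participant, and whether the participant raised the insight unprompted. The sample data, the `assess` function, and its confidence labels are hypothetical illustrations, not a standard coding scheme.

```python
from collections import defaultdict

# Hypothetical coded observations: (insight, participant archetype, raised unprompted?)
observations = [
    ("onboarding is confusing", "Pleaser", False),
    ("onboarding is confusing", "Deflector", True),
    ("onboarding is confusing", "Strategist", True),
    ("export feature is great", "Pleaser", False),
    ("export feature is great", "Pleaser", True),
    ("needs SSO integration", "Strategist", True),
    ("search is slow", "Narrator", True),
    ("search is slow", "Expert", False),
]


def assess(observations):
    """Label each insight by which archetypes surfaced it (illustrative rules only)."""
    seen_by = defaultdict(set)       # every archetype that mentioned the insight
    unprompted_by = defaultdict(set)  # archetypes that raised it without prompting
    for insight, archetype, unprompted in observations:
        seen_by[insight].add(archetype)
        if unprompted:
            unprompted_by[insight].add(archetype)

    labels = {}
    for insight, archetypes in seen_by.items():
        if archetypes == {"Pleaser"}:
            # Only Pleasers mention it: treat as possible confirmation bias.
            labels[insight] = "possible confirmation bias"
        elif {"Deflector", "Strategist"} <= unprompted_by[insight]:
            # Both Deflectors and Strategists surfaced it unprompted.
            labels[insight] = "high confidence"
        elif len(archetypes) > 1:
            labels[insight] = "cross-archetype"
        else:
            labels[insight] = "single archetype"
    return labels


for insight, label in assess(observations).items():
    print(f"{insight}: {label}")
```

The rules follow the order of the paragraph above. A Pleaser-only signal is flagged first. Agreement across archetypes raises confidence, and unprompted agreement between Deflectors and Strategists is treated as the strongest case.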

The screener design principles that prevent recruitment failures can be extended to include archetype prediction. Adding one open-ended screener question and scoring the response style gives you advance intelligence about your participant mix.
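Below is a minimal sketch of the screener scoring idea. It turns the pre-interview signals described earlier into simple rules. The word-count thresholds, the cue phrases, and `predict_archetype` are hypothetical assumptions that you would tune against your own screener responses. The output is a preparation hint, not a basis for excluding participants.

```python
from typing import Optional

# Hypothetical values: calibrate against your own screener data before relying on them.
LONG_ANSWER_WORDS = 80   # at or above this, treat the answer as "lengthy"
SHORT_ANSWER_WORDS = 8   # at or below this, treat the answer as "minimal"
FEEDBACK_CUES = ("you should", "you need to", "please add", "it would be better if")


def predict_archetype(answer: str) -> Optional[str]:
    """Return a tentative archetype for session prep, or None if no signal fires."""
    text = answer.lower()
    word_count = len(answer.split())
    # Unsolicited product feedback is the most specific signal, so check it first.
    if any(cue in text for cue in FEEDBACK_CUES):
        return "Strategist"
    if word_count >= LONG_ANSWER_WORDS:
        return "Narrator"
    if word_count <= SHORT_ANSWER_WORDS:
        return "Deflector"
    return None


responses = {
    "P01": "Fine, I use it weekly.",
    "P02": "Honestly you should add bulk export, it would save my team hours every week.",
    "P03": "I mostly use the dashboard to check weekly numbers before our Monday team meeting.",
}
predictions = {pid: predict_archetype(text) for pid, text in responses.items()}
```

The unsolicited-feedback check runs first because a long answer full of feature requests reads more like a Strategist than a Narrator. Pleasers and Experts are never predicted here, because the screening signals listed above cover only Narrators, Deflectors, and Strategists.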

Adapting Without Manipulating

A critical ethical boundary: adapting your technique to participant archetypes means meeting people where they are, not manipulating them into giving you the answers you want. The goal is to create conditions where each archetype can provide their most authentic, detailed, and honest response -- not to bypass their natural communication preferences.

This means accepting that a Deflector's indirect answers may be the most honest data they can provide given their constraints. It means recognizing that a Narrator's tangents often contain unexpected insights that a tightly controlled interview would miss. And it means understanding that a Pleaser's confirmatory responses, while methodologically problematic, reflect a real interpersonal dynamic that exists in their product usage too.

The best qualitative researchers are not those who get every participant to perform identically. They are those who recognize the variety of human communication, adapt fluidly, and interpret their data with archetype awareness baked into the analysis.

Treating every participant the same is not rigor. It is rigidity. And it is costing your team insights every single session.