Your Interview Guide Is Biasing Your Data

Every qualitative researcher knows that how you ask a question matters. Far fewer recognize that when you ask it matters just as much. The question order effect -- a well-documented phenomenon in survey research -- operates with equal force in qualitative interviews, yet most interview guides are structured around logical topic flow rather than data integrity.

The result is systematic bias hiding in plain sight. Teams run twenty interviews with the same guide, see consistent patterns in their data, and conclude they have found a real signal. But what they have actually found is an artifact of sequence: participants primed by early questions to think in particular frames, anchored to reference points established in the first five minutes, and cognitively depleted by the time they reach the questions that matter most.

This is not a minor methodological footnote. Research on cognitive load in survey design demonstrates that sequence effects can shift response patterns by 20-40 percent depending on topic sensitivity. In qualitative interviews, where responses are richer and more elaborated, the distortion compounds.

How Sequence Priming Works in Interviews

Sequence priming operates through a simple mechanism: earlier questions activate specific mental models, memories, and evaluative frames that then color everything that follows.

Context framing. If you open an interview by asking about frustrations with a product, you have activated a critical evaluation frame. Every subsequent question -- even neutral ones about workflow or preferences -- will be filtered through that lens. The participant is now in "complaint mode" and will surface negative experiences more readily than positive ones.

Anchoring effects. When you ask participants to rate satisfaction early, that number becomes an anchor. If they said "7 out of 10," subsequent qualitative responses will unconsciously calibrate to justify that rating. They will downplay serious frustrations (because a 7 means things are mostly fine) or amplify minor ones (because they need to explain why it was not a 9).

Memory activation patterns. Questions activate specific memory networks. Ask about onboarding first, and the participant retrieves early-stage memories that color their perception of current usage. Ask about current daily workflow first, and onboarding memories will be retrieved through the filter of present-day expertise -- making early struggles seem less significant than they actually were.

Teams using AI adaptive interviews have an advantage here, because algorithmic question routing can randomize or optimize the sequence based on real-time engagement signals rather than following a fixed guide.

The Fatigue Gradient Problem

Beyond priming, question order creates a fatigue gradient that systematically privileges early topics over later ones.

In a 45-minute interview, participant engagement typically follows a predictable curve: high attention for the first 10-15 minutes, stable but declining attention through minute 30, and noticeably degraded responses after that. This means questions placed at the end of your guide consistently receive less thoughtful, less detailed, and less honest answers.

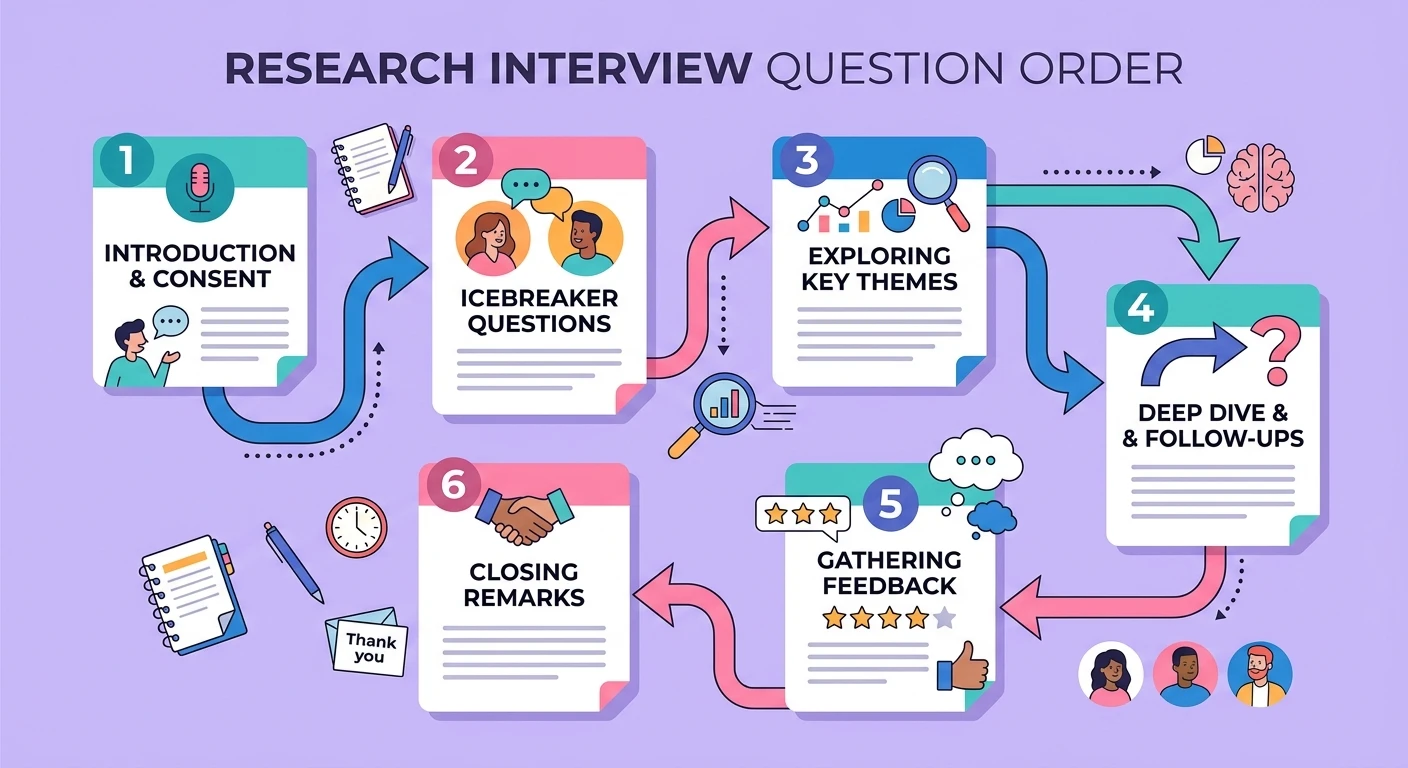

Most teams structure their guides with rapport-building questions first, core research questions in the middle, and exploratory or secondary questions at the end. The problem is that "secondary" questions often contain the most strategically valuable territory -- questions about unmet needs, competitive alternatives, or willingness to change. These get the worst data because they sit in the fatigue zone.

The participant fatigue problem is well understood in the context of research engagement, but its interaction with question ordering creates a compound effect that few teams account for in their analysis.

Practical Mitigation Strategies

Rotate your guide. The simplest fix is to create 3-4 versions of your interview guide with different topic orderings and randomly assign participants. This does not eliminate sequence effects but distributes them across your dataset so they do not systematically bias one topic.
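As a rough illustration of guide rotation, the sketch below builds a few distinct topic orderings and assigns participants to them at random while keeping group sizes even. The topic names, participant IDs, and function names are hypothetical placeholders, not part of any particular research tool.

```python
import math
import random

# Hypothetical topic blocks; substitute the sections of your own guide.
TOPICS = ["onboarding", "daily workflow", "frustrations", "unmet needs"]


def make_guide_versions(topics, n_versions, seed=0):
    """Return n_versions distinct orderings of topics (assumes topics are unique)."""
    if n_versions > math.factorial(len(topics)):
        raise ValueError("more versions requested than possible orderings")
    rng = random.Random(seed)  # seeded so the versions can be regenerated later
    versions = set()
    while len(versions) < n_versions:
        order = list(topics)
        rng.shuffle(order)
        versions.add(tuple(order))
    return sorted(versions)


def assign_participants(participant_ids, n_versions, seed=0):
    """Shuffle participants, then deal them out to versions round-robin.

    Returns a dict mapping participant id -> version index (0-based).
    Group sizes differ by at most one participant.
    """
    rng = random.Random(seed)
    ids = list(participant_ids)
    rng.shuffle(ids)
    return {pid: i % n_versions for i, pid in enumerate(ids)}


versions = make_guide_versions(TOPICS, n_versions=4)
participants = [f"P{n:02d}" for n in range(1, 21)]
assignment = assign_participants(participants, n_versions=len(versions))

for pid in participants[:3]:
    print(pid, "->", versions[assignment[pid]])
```

Shuffling first and then dealing round-robin is one simple way to get random assignment without ending up with lopsided groups, which matters when the whole study is only twenty interviews.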

Front-load your most important questions. Reverse the common pattern. Put your highest-priority research questions in the first 15 minutes when attention is highest and priming effects are minimal. Use rapport-building as a brief warm-up, not a 10-minute preamble.

Use the funnel-then-expand pattern. Start with broad, open questions that let participants establish their own frame before introducing specific topics. "Tell me about your typical day" is a better opener than "How do you use [product]?" because it allows the participant to define what is salient rather than accepting your framing.

Mark sequence artifacts in analysis. When coding transcripts, note where a response was clearly influenced by a prior question. Statements like "going back to what I said earlier" or "this relates to what you asked before" are explicit markers of sequence contamination. The principles of detecting contradictions in qualitative interviews apply here -- contradictions between early and late responses often signal sequence effects rather than genuine ambivalence.

Counterbalance sensitive topics. For topics where social desirability is high, ensure they appear at different positions across your participant pool. A question about budget constraints asked after discussing product satisfaction will get different answers than the same question asked cold.
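One common way to place a topic at different positions across the participant pool is a cyclic Latin square, where each topic occupies each position exactly once across the set of orderings. The sketch below is a minimal version under that assumption; the topic names are hypothetical.

```python
def latin_square_orders(topics):
    """Return len(topics) orderings in which every topic appears once at each position.

    Each row is the original list rotated by one more step (a cyclic Latin square).
    """
    n = len(topics)
    return [[topics[(start + offset) % n] for offset in range(n)]
            for start in range(n)]


# Hypothetical topic blocks, including one socially sensitive topic.
topics = ["product satisfaction", "budget constraints", "workflow", "alternatives"]
orders = latin_square_orders(topics)

for version, order in enumerate(orders, start=1):
    print(f"Guide {version}: {order}")
```

A cyclic rotation balances position but not adjacency: apart from the wrap-around, each topic still follows the same predecessor in every version. If the concern is specifically what a sensitive question comes right after, the orderings need additional variation beyond rotation.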

Why AI-Assisted Research Has an Advantage

Traditional interview guides are static documents. Once written, every participant gets the same sequence regardless of how they respond. This makes sequence effects consistent and therefore invisible -- they look like real patterns because they appear across all interviews.

AI-assisted research platforms can dynamically adjust question ordering based on participant responses, engagement signals, and research priorities. This does not eliminate sequence effects, but it makes them variable across participants rather than constant -- which means they add noise rather than systematic bias.

The shift from static guides to adaptive conversation flows represents a fundamental methodological advance. It is the qualitative equivalent of randomized controlled trial design: you cannot eliminate confounds, but you can ensure they do not all point in the same direction.

For teams building research repositories that people actually use, recording question order in the metadata makes cross-study comparison more reliable. Tagging which guide version was used, and where in the sequence a particular insight was elicited, gives future researchers the context they need to assess data quality.
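A minimal sketch of what such tagging might look like is shown below. The record fields and helper names are illustrative assumptions rather than the schema of any specific repository tool.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class InsightRecord:
    """One coded insight plus the sequence context it was elicited in (hypothetical schema)."""
    participant_id: str
    guide_version: str
    topic: str
    position: int  # 1-based position of the topic in the guide this participant saw
    quote: str


def positions_by_topic(records):
    """Map each topic to the set of guide positions at which it was discussed."""
    seen = defaultdict(set)
    for record in records:
        seen[record.topic].add(record.position)
    return dict(seen)


def single_position_topics(records):
    """Topics only ever asked at one position, so order and content cannot be separated."""
    return sorted(topic for topic, positions in positions_by_topic(records).items()
                  if len(positions) == 1)


records = [
    InsightRecord("P01", "A", "unmet needs", 4, "I just export everything to a spreadsheet."),
    InsightRecord("P02", "B", "unmet needs", 1, "Honestly, I wish it handled approvals."),
    InsightRecord("P01", "A", "pricing", 3, "It is fine for what we pay."),
    InsightRecord("P02", "B", "pricing", 3, "Budget is tight this year."),
]

print(single_position_topics(records))
```

Flagging topics that were only ever asked at one position gives a later reader a quick signal about which findings could not have been checked against sequence effects.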

The Sequence Audit

Before your next research project, run a sequence audit on your interview guide:

- Identify which questions prime specific frames (critical, positive, comparative)

- Map the fatigue gradient -- which questions sit in the degraded-attention zone?

- Check for anchoring -- do any early questions establish numerical or categorical reference points?

- Look for leading sequences -- does question 4 make the answer to question 5 predictable?

- Create at least two guide variants with different topic orderings
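The fatigue-gradient step of the audit can be roughed out in a few lines. The sketch below assumes you can estimate how long each guide section takes, and it uses the 15-minute and 30-minute boundaries from the engagement curve described earlier as default thresholds. The section names and durations are made up for illustration.

```python
def fatigue_zones(sections, high_until=15, degraded_after=30):
    """Label each guide section by the attention zone in which it starts.

    sections: list of (label, estimated_minutes) tuples in guide order.
    Returns a list of (label, start_minute, zone) tuples.
    """
    elapsed = 0
    report = []
    for label, minutes in sections:
        if elapsed < high_until:
            zone = "high attention"
        elif elapsed < degraded_after:
            zone = "declining"
        else:
            zone = "degraded"
        report.append((label, elapsed, zone))
        elapsed += minutes
    return report


# Hypothetical 45-minute guide with estimated durations in minutes.
guide = [
    ("rapport", 10),
    ("core workflow", 15),
    ("pricing", 10),
    ("unmet needs", 10),
]

for label, start, zone in fatigue_zones(guide):
    print(f"{start:>2} min  {label:<15} {zone}")
```

Running this against each guide variant shows at a glance whether a high-priority section always starts in the degraded zone. The thresholds are parameters, since real engagement curves will vary by audience and interview length.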

The goal is not to eliminate sequence effects -- that is impossible in sequential conversation. The goal is to make them visible, variable, and accounted for in your analysis. Teams who treat question order as a methodological variable rather than a convenience choice consistently produce more reliable qualitative data.

The difference between good research and great research is rarely about asking better questions. It is about understanding how the structure of your inquiry shapes what you are able to hear.