The Problem You Cannot See

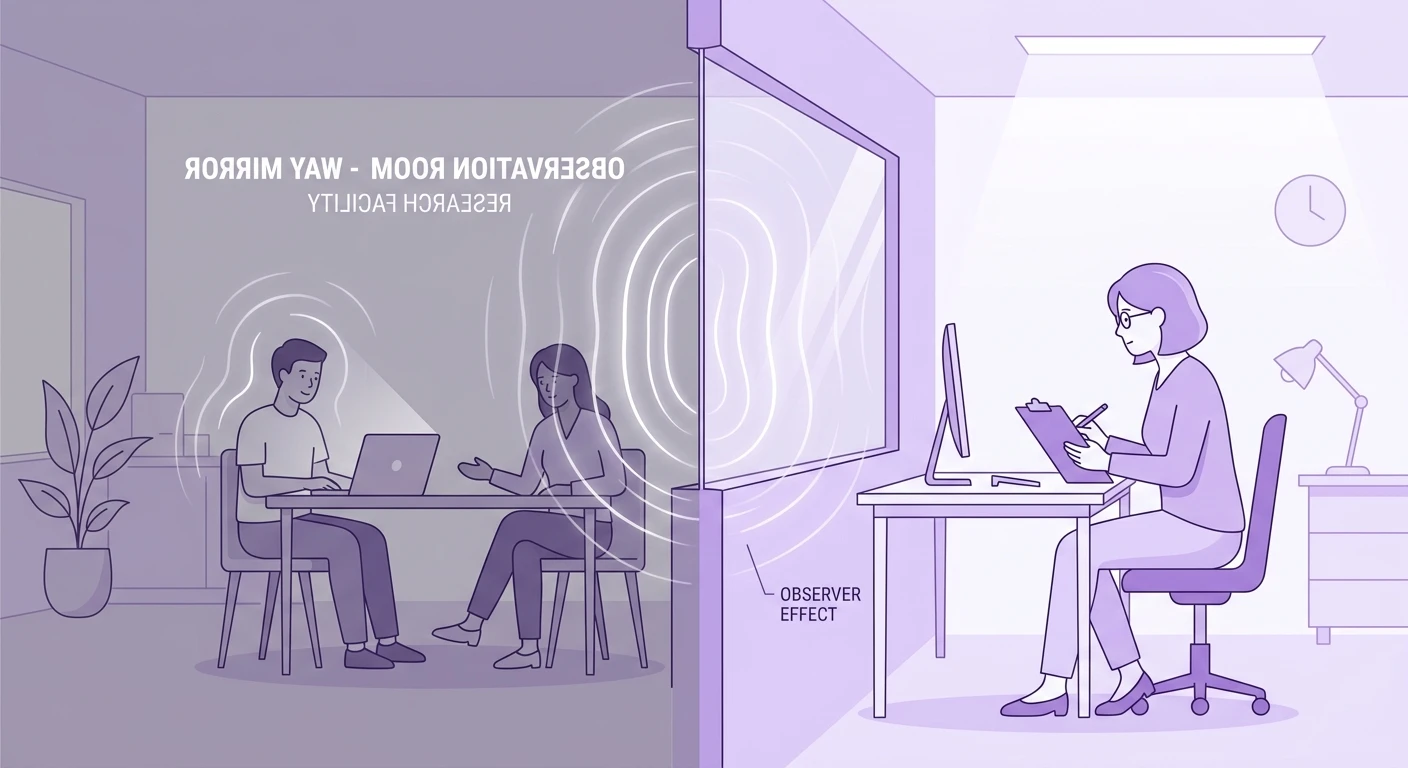

Every usability test has an uninvited guest: the awareness of being observed. When a participant knows someone is watching -- whether through a one-way mirror, a screen share, or simply the presence of a note-taking researcher -- their behavior shifts in predictable but often invisible ways.

This is the observer effect, borrowed from physics but devastatingly relevant to UX research. The act of measurement changes the thing being measured. And unlike physics, we cannot solve it with better instruments. We solve it with better methodology.

The consequences are not trivial. Teams that ignore the observer effect build products optimized for performance-mode users rather than natural-mode users. The gap between what people do in a study and what they do at home is where failed launches live.

How Observation Distorts Behavior

The observer effect manifests in several predictable patterns:

Social desirability bias. Participants complete tasks they would normally abandon. They suppress frustration. They avoid behaviors they perceive as "wrong" -- like using the back button repeatedly or reading help documentation.

Performance anxiety. Users move more slowly, read more carefully, and second-guess choices they would make instinctively in private. The research session becomes a test rather than a usage scenario.

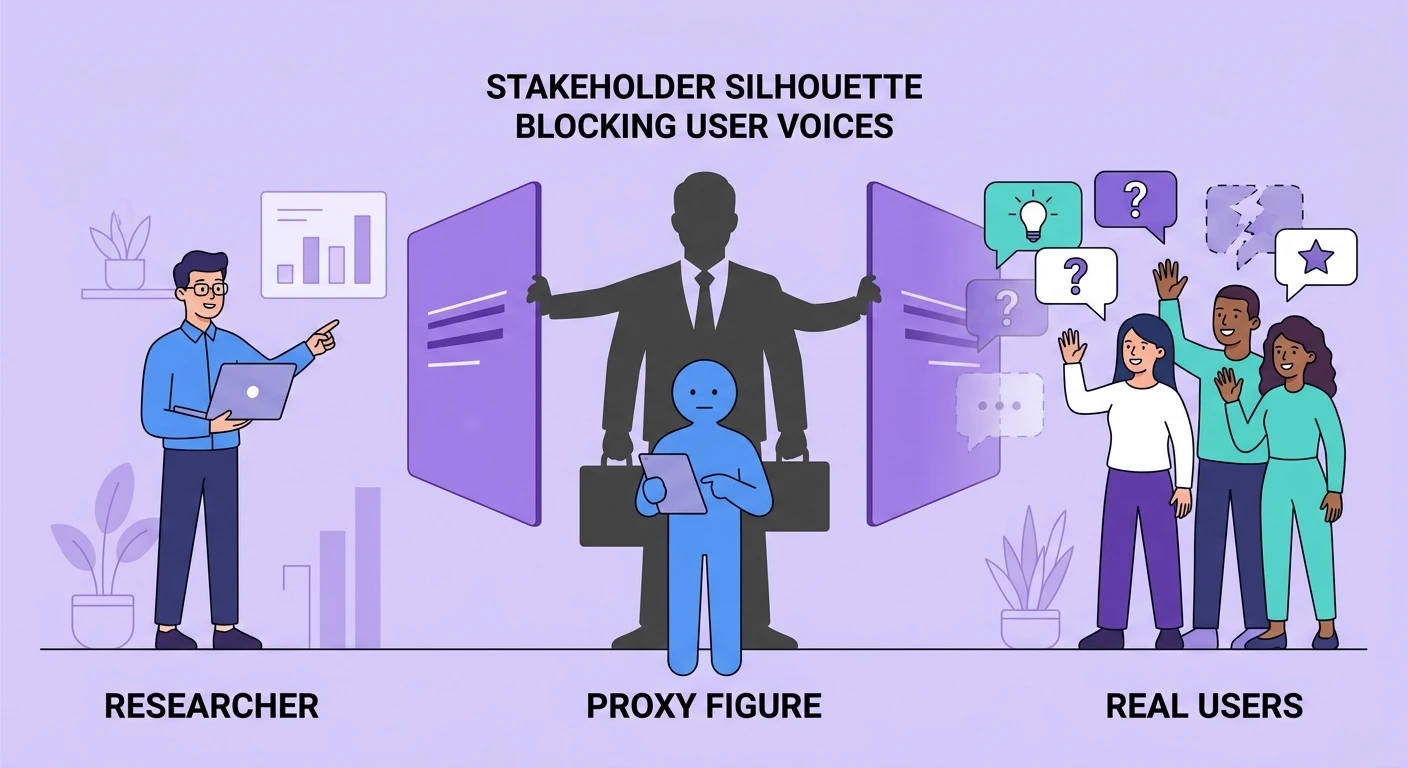

Demand characteristics. Participants try to figure out what the researcher wants to see, then deliver it. This is not deception -- it is human social cognition operating exactly as designed.

Reduced exploration. In unobserved usage, people click randomly, explore menus out of curiosity, make errors and recover. Under observation, they stay on the "golden path" because deviation feels risky.

These distortions compound. A team that runs 10 usability tests without accounting for the observer effect does not have 10 data points. They have 10 measurements of a different construct: how users perform under observation, not how they actually use the product.

Why Traditional Mitigation Fails

The standard advice -- "make participants comfortable" and "remind them it is not a test" -- addresses surface anxiety without touching the deeper social dynamics. You cannot eliminate the observer effect through rapport alone, any more than you can eliminate gravity through friendliness.

Even AI-moderated interviews do not fully eliminate the effect. While removing a human observer reduces social desirability pressure, participants still know their responses are being recorded and analyzed. The awareness of future judgment persists.

The honest methodological position is that you cannot eliminate the observer effect. You can only understand its direction, estimate its magnitude, and triangulate around it.

Strategies That Actually Work

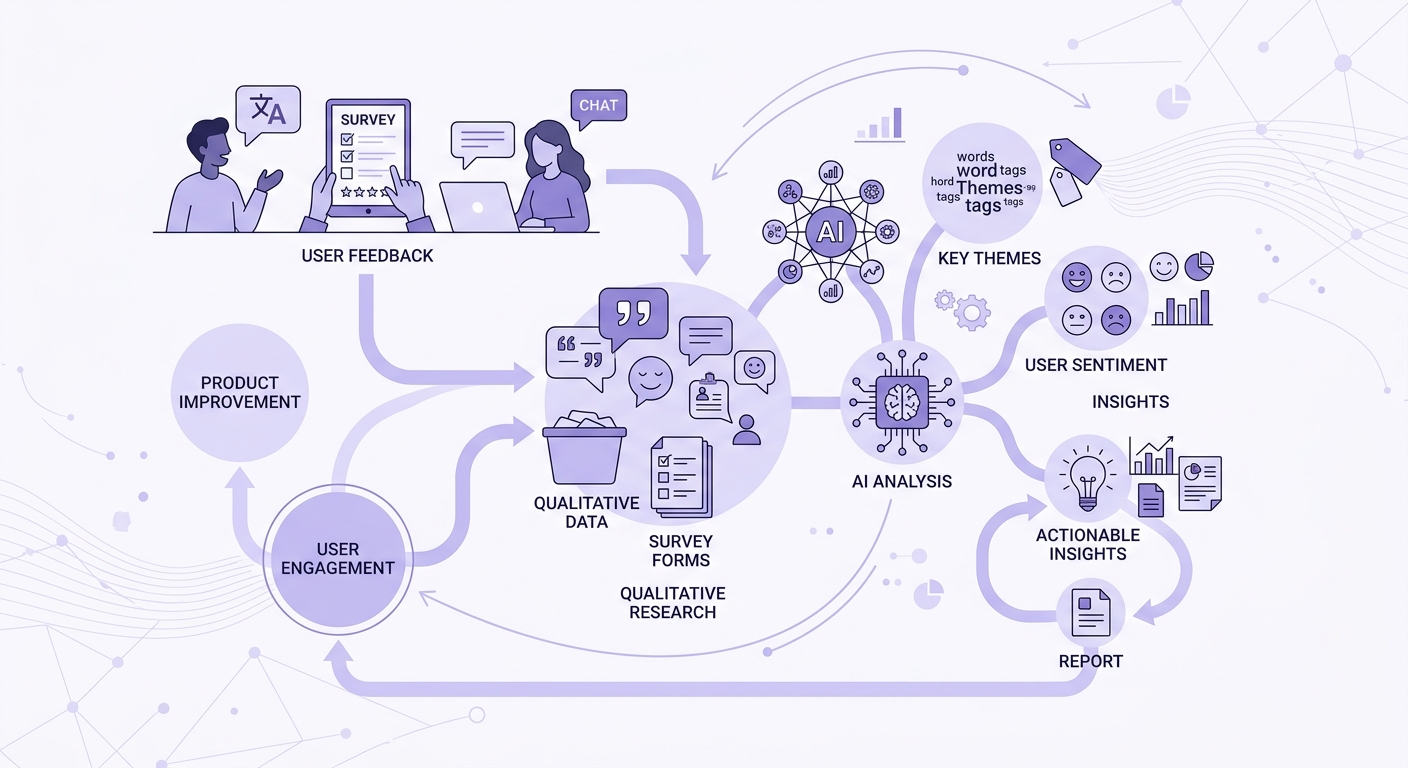

Triangulate with unobserved data. Combine research sessions with analytics, session recordings (where users consented in advance and are no longer conscious of being recorded), and support tickets. The gap between observed and unobserved behavior IS your finding -- it tells you what users suppress.

Use longitudinal methods. Diary studies reduce the observer effect because the observation becomes routine. By around day five of a two-week diary study, most participants have stopped performing. The early entries show you the effect; the late entries show you something much closer to reality.
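One way to act on the early-versus-late distinction is to split diary entries at the point where participants are expected to have settled and compare the two halves. The sketch below is illustrative only: the `day` and `frustration` field names, the 1-5 score, and the sample entries are all hypothetical, and the day-five cutoff is the rule of thumb from the text, not a measured threshold.

```python
from statistics import mean


def split_diary_entries(entries, settle_day=5):
    """Split diary entries into (early, late) around settle_day.

    settle_day is an assumed cutoff; tune it to your own study.
    """
    early = [e for e in entries if e["day"] < settle_day]
    late = [e for e in entries if e["day"] >= settle_day]
    return early, late


# Hypothetical entries: study day plus a self-reported frustration score (1-5).
entries = [
    {"day": 1, "frustration": 1},
    {"day": 2, "frustration": 1},
    {"day": 3, "frustration": 2},
    {"day": 6, "frustration": 3},
    {"day": 9, "frustration": 4},
    {"day": 13, "frustration": 3},
]

early, late = split_diary_entries(entries)
early_mean = mean(e["frustration"] for e in early)
late_mean = mean(e["frustration"] for e in late)
print(f"early mean: {early_mean:.2f}, late mean: {late_mean:.2f}")
```

If the late entries report noticeably more frustration than the early ones, that difference is a candidate measure of what participants were suppressing while the observation still felt new.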

Leverage asynchronous research. When participants respond on their own time, in their own context, without a live audience, the observer effect diminishes substantially. This is why asynchronous research methods often produce more naturalistic data than synchronous sessions.

Build in warm-up tasks. The first task in any session absorbs the worst of performance anxiety. Design your study so task one is a throwaway -- something easy that lets participants settle into natural behavior before you start collecting data that matters.

Separate observation from evaluation. Frame the session explicitly: "We are testing the product, not you. Every difficulty you encounter is a design failure, not a user failure." This does not eliminate the effect, but it redirects the performance pressure away from the participant and toward the product.

The Paradox of Remote Research

Remote unmoderated testing was supposed to solve the observer effect. Participants at home, no researcher present, natural environment. But the data tells a more complex story.

Remote participants know they are being recorded. They know someone will watch the video. In some ways, the imagined observer is worse than the real one, because participants cannot calibrate their performance to social cues: they cannot tell whether the researcher is bored, satisfied, or has stopped watching.

The result is often hyper-articulate think-aloud behavior that sounds nothing like natural cognition. Users narrate their experience for an imagined audience rather than simply experiencing it.

The lesson: remote testing changes the observer effect rather than eliminating it. Account for this when interpreting remote research data.

Designing for the Effect

The most sophisticated approach is not to eliminate the observer effect but to design your research program around it:

- Know when it matters. For discovery research ("what do users need?"), the observer effect is highly distorting. For evaluative research ("can users complete this flow?"), it matters less -- even observed users will fail at genuinely broken interfaces.

- Quantify the gap. Compare analytics data with research data for the same flows. The delta is a working estimate of your observer effect magnitude. If research shows 90% task completion but analytics shows 60%, observation may be flattering your product by as much as 30 percentage points, provided the two samples and the definition of "completion" are comparable.

- Use it as signal. What users suppress under observation often reveals their mental model of "correct" behavior. That mental model IS useful data -- it tells you about expectations, learned conventions, and perceived social norms around technology use.
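The "quantify the gap" step above can be sketched in a few lines. This is a minimal illustration, not a full analysis: the function name `completion_gap` and the participant counts are hypothetical, chosen to reproduce the 90% versus 60% example, and the standard error uses the usual formula for a difference between two independent proportions.

```python
from math import sqrt


def completion_gap(observed_done, observed_n, natural_done, natural_n):
    """Return (gap, standard_error) for observed minus unobserved completion rate."""
    p_obs = observed_done / observed_n
    p_nat = natural_done / natural_n
    gap = p_obs - p_nat
    # Standard error of a difference between two independent proportions.
    se = sqrt(p_obs * (1 - p_obs) / observed_n + p_nat * (1 - p_nat) / natural_n)
    return gap, se


# Hypothetical counts: 9 of 10 session participants completed the flow,
# versus 600 of 1000 users in analytics.
gap, se = completion_gap(9, 10, 600, 1000)
print(f"gap: {gap:.0%}, standard error: {se:.1%}")
```

With only ten observed participants the standard error comes out just under 10 percentage points, so the estimate is a direction and rough size, not a precise figure. It also folds in any difference between who gets recruited for studies and who actually uses the product.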

As research triangulation principles suggest, no single method gives you ground truth. The observer effect is not a bug in qualitative research -- it is a reminder that human behavior is context-dependent, and research contexts are contexts too.

Implications for AI-Powered Research

AI research tools introduce a new wrinkle. When an AI conducts the interview, participants may be more honest about certain topics (reducing social desirability bias) but more performative about others (because they are aware the conversation is being analyzed computationally).

The teams building production AI systems for research need to account for how the presence of AI changes participant behavior. Early evidence suggests that AI interviewers reduce but do not eliminate the observer effect -- they shift it from social performance to computational performance.

The Bottom Line

The observer effect is not a flaw in your research methodology. It is a fundamental property of studying conscious beings who know they are being studied. The researchers who produce the best data are not those who pretend it does not exist -- they are those who design their programs to account for it, triangulate around it, and use the gap between observed and natural behavior as insight rather than noise.

Stop trying to make your research sessions feel "natural." They are not natural. They are research. Design accordingly.
