The Translation Trap

You have a well-tested interview guide. It works beautifully in English with US participants. Your stakeholders want to expand the study to Japan, Brazil, and Germany. The obvious move: send the guide to a translation agency, get it back in three languages, and start recruiting.

This is how most global research projects destroy their own data before a single session begins.

The problem is not linguistic accuracy. Modern translation services are excellent at converting words between languages. The problem is that questions carry cultural assumptions baked into their structure, their framing, and the probing patterns that follow them. When you translate the words but not the cultural logic, you get responses that look like data but measure something entirely different from what you intended.

A US-designed question like "Tell me about a time you complained to customer service" assumes complaining is a normal, socially acceptable behavior. In Japan, where direct confrontation with service providers carries significant social friction, this question does not just translate poorly. It actively filters out many of the most meaningful experiences your participants have had. You end up with a skewed sample of unusually assertive Japanese consumers, and your stakeholders walk away thinking Japanese users rarely have service problems.

They have plenty of problems. Your question just could not find them.

Why Probing Styles Must Adapt

The interview guide is only half the equation. How a moderator probes, follows up, and builds conversational momentum varies dramatically across communication cultures.

Edward Hall's high-context and low-context framework remains useful here, even if oversimplified. In low-context cultures like the US, the Netherlands, or Germany, effective probing is direct: "Can you tell me more about that?" "What specifically frustrated you?" "Why did you choose that option?" Participants expect pointed questions and respond with explicit statements.

In high-context cultures like Japan, Korea, China, or much of the Middle East, the same direct probing creates discomfort. Participants may give shorter answers, agree with whatever framing the moderator implies, or provide socially desirable responses rather than authentic ones. Effective probing in these contexts is often indirect: asking about what "people generally" do rather than what the participant personally does, using hypothetical scenarios, or allowing longer silences that Western moderators instinctively rush to fill.

Consider this example. A US moderator asks a Korean participant: "What did you dislike about the onboarding flow?" The participant says it was fine. The moderator probes: "Nothing at all? Even small things?" The participant mentions one minor issue politely.

Contrast this with a culturally adapted approach: "If a friend was using this app for the first time, what advice would you give them?" The same Korean participant now describes three significant pain points in detail, framed as helpful advice rather than personal criticism. Same person, radically different data quality.

This is not about participants being dishonest. It is about designing interviews that account for how people naturally communicate in their cultural context.

The Five Dimensions of Cultural Question Framing

Run cross-cultural research programs across 30+ markets and a pattern emerges: five dimensions consistently determine whether a translated question will produce valid data or noise.

1. Directness of Inquiry

Some cultures reward directness. Others treat it as rude or confrontational. Your questions need to match the participant's expected communication register.

- Direct cultures (US, Germany, Israel): "What is your biggest frustration with X?"

- Indirect cultures (Japan, Thailand, Mexico): "How do people in your circle typically handle challenges with X?"

2. Individual vs Collective Framing

Western research defaults to individual experience. Many cultures process decisions and experiences collectively.

- Individual framing: "Why did you choose this product?"

- Collective framing: "How did your family or household decide on this product?"

In India, purchasing decisions for consumer electronics often involve extended family input. Asking "why did you choose" implies a solo decision that may never have happened.

3. Relationship to Authority

In cultures with high power distance, participants may defer to the moderator as an authority figure. Questions framed as expert-to-layperson create compliance rather than candor.

- High power distance adjustment: Position the participant as the expert. "You know your daily routine better than anyone. Walk me through how this fits into your day."

4. Temporal Orientation

Not all cultures relate to time the same way. US participants readily discuss future intentions and hypothetical scenarios. Participants in cultures with more present or past orientation may find speculative questions confusing or meaningless.

- Future-oriented: "How would you ideally want this to work?"

- Present-oriented: "What matters most to you about how this works right now?"

5. Emotional Expression Norms

Some cultures openly express frustration, delight, or confusion. Others maintain emotional restraint in formal settings like research interviews. Your probing must calibrate accordingly.

Moderators who are not attuned to these norms often misread neutral or restrained responses as indifference, when the participant actually holds strong opinions they are expressing through subtle cues. This is one of the most common forms of moderator bias in cross-cultural research.

A Practical Framework for Cultural Adaptation

Direct translation is a pipeline. Cultural adaptation is a process. Here is a framework that works.

Step 1: Cultural Desk Research

Before touching the interview guide, spend time understanding communication norms in the target market. Frameworks like Hofstede's cultural dimensions and Erin Meyer's The Culture Map provide a starting point, but supplement them with input from local practitioners.

Step 2: Reconstruct, Do Not Translate

Take each question and ask: what insight am I trying to generate? Then build a new question that generates that insight within the target culture's communication norms. This often means the adapted guide looks structurally different from the original, and that is correct.
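One way to keep a guide organized around the insight each question must generate, rather than around its wording, is to store the questions per market under a shared research objective. The sketch below is a hypothetical structure: `ResearchObjective`, `question_for`, `missing_adaptations`, and the market codes are illustrative names, not part of any tool. The two example questions are the direct and indirect phrasings from the directness dimension above.

```python
from dataclasses import dataclass, field


@dataclass
class ResearchObjective:
    """One insight the study needs, kept separate from any market's wording."""

    goal: str
    # Hypothetical market code -> question reconstructed for that market's norms.
    questions: dict[str, str] = field(default_factory=dict)

    def question_for(self, market: str) -> str:
        # No silent fallback to another market's wording: a missing entry
        # means the question has not been reconstructed for this market yet.
        if market not in self.questions:
            raise KeyError(f"No adapted question for {market!r}: {self.goal}")
        return self.questions[market]


def missing_adaptations(guide: list[ResearchObjective], markets: list[str]) -> dict[str, list[str]]:
    """Map each objective's goal to the markets that still lack a reconstructed question."""
    gaps = {}
    for objective in guide:
        missing = sorted(set(markets) - set(objective.questions))
        if missing:
            gaps[objective.goal] = missing
    return gaps


frustration = ResearchObjective(
    goal="Surface the biggest pain points with X",
    questions={
        "US": "What is your biggest frustration with X?",
        "JP": "How do people in your circle typically handle challenges with X?",
    },
)

print(frustration.question_for("JP"))
print(missing_adaptations([frustration], ["US", "JP", "BR"]))
```

Raising an error instead of falling back to the English wording is a deliberate choice in this sketch: a skipped adaptation becomes visible rather than quietly reintroducing direct translation.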

Step 3: Local Moderator Calibration

Brief local moderators on the research objectives, not the specific question wordings. Give them latitude to probe in culturally appropriate ways. The best cross-cultural data comes from moderators who understand the research goals deeply enough to adapt their approach in real time.

Step 4: Back-Translation Validation

Have the adapted guide translated back to the original language by someone who was not involved in the initial adaptation. Compare the back-translation to the original. Discrepancies often reveal cultural assumptions worth discussing.
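Comparing the back-translation with the original is ultimately a human discussion, but on a long guide it can help to rank which questions moved furthest. A minimal sketch, assuming the original and back-translated questions are aligned one-to-one in two lists; `flag_discrepancies` and the 0.6 threshold are illustrative choices, and the word-level similarity comes from Python's standard `difflib.SequenceMatcher`. A low score is not an error, since reconstructed questions are expected to differ; it only marks where to start the conversation.

```python
import difflib


def flag_discrepancies(original, back_translated, threshold=0.6):
    """Return (index, similarity, original, back-translation) for pairs below threshold.

    Similarity is difflib's ratio over lowercased word sequences (0.0 to 1.0).
    The 0.6 default threshold is an arbitrary starting point, not a standard.
    """
    if len(original) != len(back_translated):
        raise ValueError("Guides must have the same number of questions")
    flagged = []
    for i, (src, back) in enumerate(zip(original, back_translated)):
        ratio = difflib.SequenceMatcher(None, src.lower().split(), back.lower().split()).ratio()
        if ratio < threshold:
            flagged.append((i, round(ratio, 2), src, back))
    # Lowest similarity first: the largest shifts are the first ones to discuss.
    return sorted(flagged, key=lambda item: item[1])


original = [
    "Why did you choose this product?",
    "What did you dislike about the onboarding flow?",
]
back_translated = [
    "Why did you choose this product?",
    "If a friend was using this app for the first time, what advice would you give them?",
]

for index, score, src, back in flag_discrepancies(original, back_translated):
    print(f"Q{index + 1} ({score}): {src!r} -> {back!r}")
```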

Step 5: Pilot and Iterate

Run 2-3 pilot sessions per market before launching. Listen specifically for signs of social desirability bias, confusion, or thin responses that suggest the question framing is not working.

Where AI Changes the Game

Manual cultural adaptation is expensive and slow. It requires local expertise for every market, extensive piloting, and careful moderator training. This is one reason many teams skip it and default to translation, accepting the data quality hit for speed.

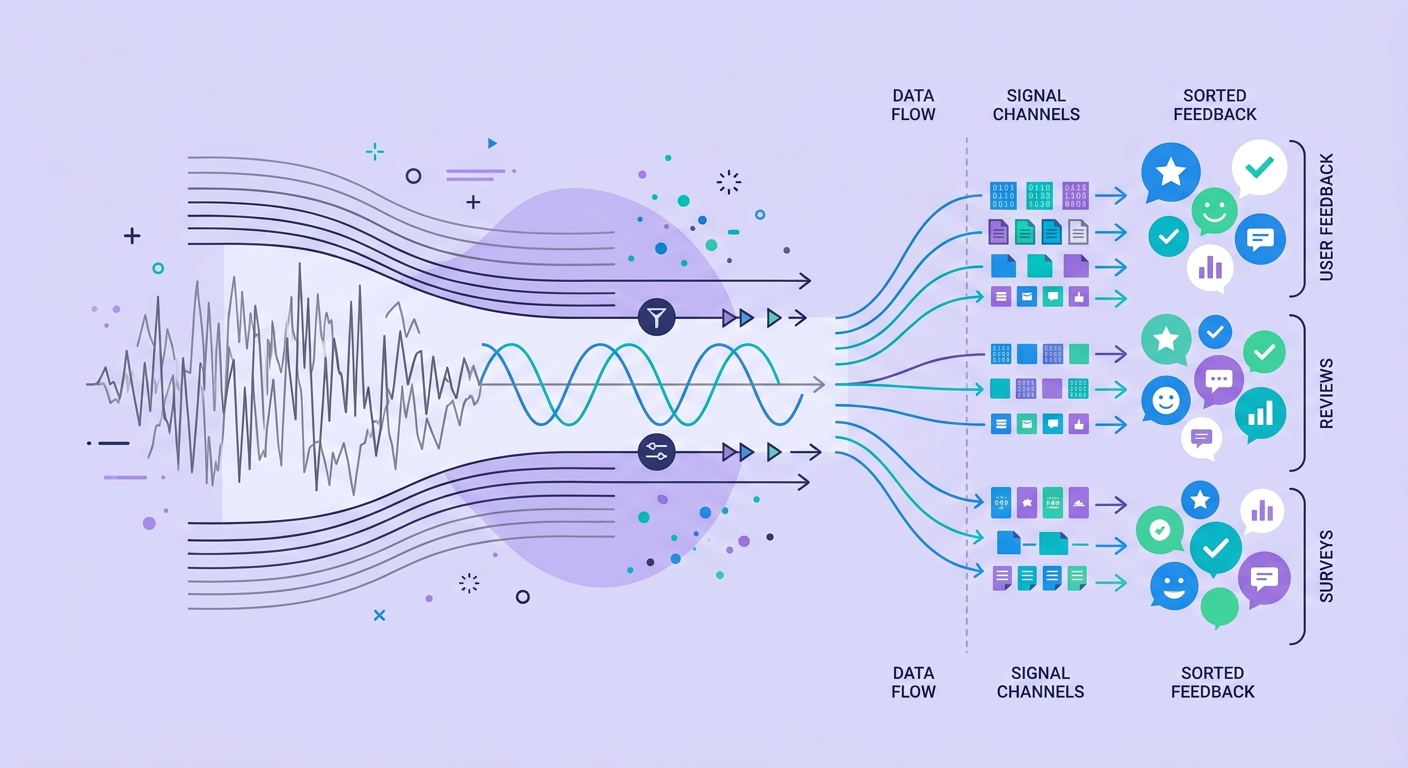

AI-moderated interviews are beginning to change this calculation. An AI moderator with proper cultural calibration can adjust probing styles dynamically based on the participant's communication patterns. It can detect when a participant is giving socially desirable responses and shift to indirect questioning. It can maintain the patience for longer silences that human moderators from direct cultures struggle with.

The key phrase is "proper cultural calibration." An AI system that simply translates an English interview guide into Japanese and asks the questions in order is just automated bad research. But systems built with AI memory architectures that retain cultural context across sessions can learn and adapt their approach for each market over time.

This is not theoretical. Teams running AI-moderated interviews across multiple markets report data quality improvements, which they attribute specifically to the AI not bringing the personal cultural biases of a human moderator from a different culture. An AI moderator does not get impatient with silences. It does not unconsciously signal approval or disapproval through facial expressions. It does not default to its own culture's probing patterns when the conversation gets difficult.

But this advantage only holds if the AI system is deliberately designed for cultural adaptation rather than just multilingual operation. The distinction matters enormously, and it mirrors the broader deployment paradox of scaling AI across diverse contexts without losing effectiveness.

What Good Looks Like

Teams that do cross-cultural research well share a few characteristics:

- They budget 30-40% more time for cultural adaptation than for translation alone

- They treat the interview guide as a set of research objectives rather than a fixed script

- They hire local researchers as collaborators, not just translators

- They expect their adapted guides to look different across markets

- They validate data quality by comparing response depth and diversity across markets, not just completion rates
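Response depth and diversity can be approximated, crudely, from transcripts. A minimal sketch, assuming responses are already grouped by market: mean words per response stands in for depth, the share of answers under a minimum length flags the thin responses that Step 5 pilots listen for, and a type-token ratio (distinct words over total words) stands in for diversity. The function name, the 10-word cutoff, and the choice of metrics are illustrative assumptions. Whitespace splitting also only works for space-delimited languages; Japanese or Chinese transcripts would need a proper tokenizer, and type-token ratio falls as text gets longer, so treat these numbers as rough signals rather than scores to rank markets by.

```python
from statistics import mean


def depth_and_diversity(responses_by_market, min_words=10):
    """Per-market proxies: mean words per response, share of thin responses,
    and type-token ratio across all of the market's responses."""
    report = {}
    for market, responses in responses_by_market.items():
        if not responses:
            continue  # nothing to measure for this market yet
        # Whitespace tokenization: an assumption that fails for unsegmented scripts.
        word_lists = [r.lower().split() for r in responses]
        all_words = [w for words in word_lists for w in words]
        report[market] = {
            "mean_words": mean(len(words) for words in word_lists),
            "thin_share": sum(len(words) < min_words for words in word_lists) / len(word_lists),
            "type_token_ratio": len(set(all_words)) / len(all_words) if all_words else 0.0,
        }
    return report


pilot = {
    "US": ["The setup screen asked for too much information before I could see anything useful", "Fine"],
    "KR": ["It was fine", "It was fine"],
}

for market, metrics in depth_and_diversity(pilot).items():
    print(market, metrics)
```

In this toy pilot data every KR answer falls under the cutoff, which is the cue to revisit the question framing rather than to conclude that the market has no problems.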

The teams that consistently produce the best global research insights have stopped asking "how do we translate this study?" and started asking "how do we study this question in each culture?" The difference sounds subtle. In practice, it is the difference between data you can act on and data that leads you astray.

The Bottom Line

Direct translation of research instruments is not a shortcut. It is a trap that produces data shaped more by cultural friction than by actual user experience. Cultural probing requires rebuilding your research approach for each context, not just converting its language.

The good news: the tools for doing this well are getting dramatically better. AI-moderated research that adapts to cultural communication norms is no longer aspirational. But the technology only works when built on a foundation of genuine cultural understanding, not just multilingual capability.

Your global research is only as good as your worst-adapted market. Make cultural probing a first-class concern, not an afterthought, and your cross-cultural insights will actually be worth the investment.