Conversational analysis has long been one of the most labor-intensive qualitative methods. Where thematic analysis requires reading and coding, conversational analysis demands microscopic attention to how people talk -- the pauses, the interruptions, the repair sequences, the way someone reformulates a question before answering it. These micro-level patterns reveal power dynamics, comfort levels, and cognitive processing that surveys rarely capture.

But the method has been trapped in a paradox. The richer the data, the longer the analysis takes. A single hour-long interview can require eight to twelve hours of manual annotation for a thorough conversational analysis. Scale that to a twenty-participant study and you are looking at months of work for a single researcher.

This is why conversational analysis has remained a niche method despite its analytical power. Product teams that would benefit enormously from understanding how users talk about their problems -- the hesitations, the contradictions, the moments of confusion -- rarely have the time or budget to commission this kind of deep analysis.

AI-powered pattern recognition is changing that equation fundamentally. Not by automating interpretation, but by handling the tedious structural coding that consumes most of the analyst's time.

What Conversational Analysis Actually Reveals

Before discussing how AI accelerates the process, it is worth understanding what conversational analysis captures that other methods miss.

Standard thematic analysis focuses on what people say -- the content, the themes, the topics. Conversational analysis focuses on how people say it. The distinction matters enormously for product research.

Consider a user interview about a project management tool. Thematic analysis might identify that users mention "collaboration" as a key theme. Conversational analysis might reveal that every time users discuss collaboration features, they pause, hedge, and qualify their statements -- signals that they may find the feature confusing but feel social pressure not to criticize it directly.

Turn-taking patterns expose group dynamics in focus groups that content analysis alone cannot detect. Who speaks after whom, who gets interrupted, who defers to others -- these patterns can reveal informal hierarchies and influence networks within organizations. When a junior team member consistently defers to their manager during a usability session, the manager's preferences may be drowning out the actual end-user experience.

Repair sequences -- moments where speakers correct themselves or reformulate what they have said -- are gold mines for identifying usability problems. When users say "I would click on... well, actually, I would first look for... no, I think I would..." they are revealing genuine navigational confusion in real time. This data is far more diagnostic than a post-hoc "ease of use" rating on a Likert scale.
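As a rough illustration of how surface cues in a self-repair like the one above could be flagged automatically, here is a minimal rule-based sketch in Python. It is not how any particular AI tool works: the cue names and regular expressions are hypothetical, cover only a few English markers, and each hit is only a candidate that a model or a trained coder would still need to confirm in context.

```python
import re

# Hypothetical surface cues for English self-repair. They only flag candidates;
# deciding whether each one is a genuine repair still requires context.
REPAIR_CUES = {
    "trail_off": r"\.\.\.",                      # word or phrase abandoned mid-way
    "well_actually": r"\bwell,?\s+actually\b",   # explicit reformulation marker
    "restart_no": r"\bno,\s+I\b",                # rejects the previous formulation and restarts
    "i_mean": r"\bI mean\b",                     # clarifying reformulation
}


def find_repair_cues(utterance: str) -> list[tuple[str, int]]:
    """Return (cue_name, character_offset) pairs, ordered by position in the utterance."""
    hits = []
    for name, pattern in REPAIR_CUES.items():
        for match in re.finditer(pattern, utterance, flags=re.IGNORECASE):
            hits.append((name, match.start()))
    return sorted(hits, key=lambda hit: hit[1])


utterance = "I would click on... well, actually, I would first look for... no, I think I would..."
for name, offset in find_repair_cues(utterance):
    print(f"{offset:3d}  {name}")
```

Counting cues per turn, rather than reading every turn, is what lets this kind of flagging scale across a corpus; the false positives it produces are why the interpretive step stays with the researcher.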

The Manual Coding Bottleneck

Traditional conversational analysis follows a rigorous but painfully slow process. The researcher first transcribes the interview using Jefferson notation or a similar system that captures overlaps, pauses, emphasis, and intonation. Then they identify sequences of interest -- openings, closings, topic transitions, trouble spots. Each sequence is coded for its structural properties. Patterns are identified across sequences and across participants.

The transcription alone is a bottleneck. Jefferson notation requires marking pause lengths to the tenth of a second, indicating rising and falling intonation, noting overlapping speech, and capturing non-verbal sounds. A single minute of conversation can take ten minutes to transcribe at this level of detail.

Then comes the coding. Each conversational turn needs to be classified: is it a question, an answer, a repair initiation, a backchannel, a topic shift? Are there adjacency pairs that deviate from expected patterns? Where are the preference structures -- moments where speakers choose between preferred, aligning responses and dispreferred, disaligning ones?

This is the work that AI can accelerate, potentially by an order of magnitude. Not the interpretation -- the structural identification. Finding patterns across hundreds of conversational turns is fundamentally a pattern recognition problem, and that is where modern speech and language models are strongest.

How AI Pattern Recognition Works in Conversational Analysis

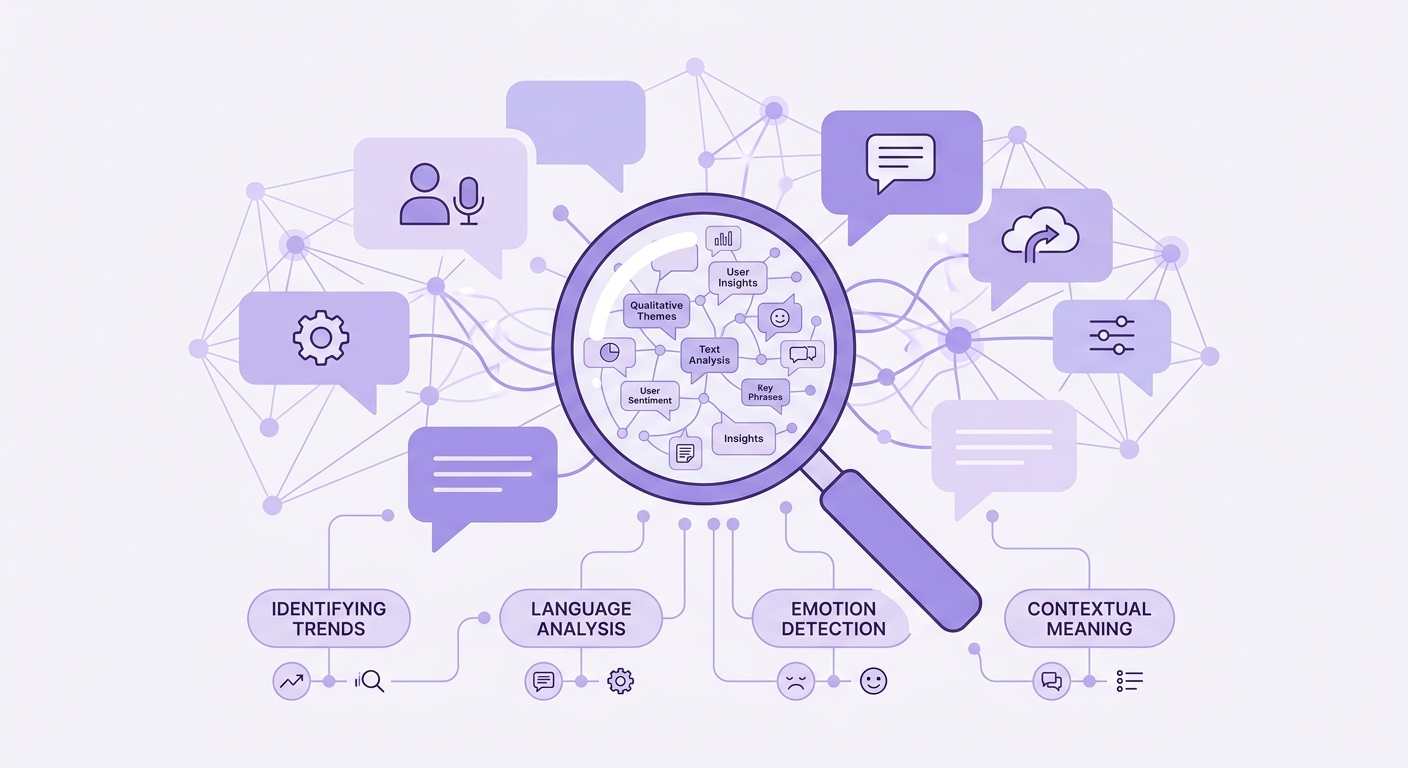

Modern AI-powered conversational analysis operates on multiple levels simultaneously.

At the acoustic level, speech processing models identify pauses, overlaps, emphasis, and prosodic contours. These features can be extracted automatically from audio recordings, and for many structural features the accuracy on clean audio approaches that of trained human coders, although the output still benefits from spot-checking. Manually timing pauses with a stopwatch is no longer necessary.
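A minimal sketch of the gap and overlap measurement, assuming a speaker-diarization step has already produced start and end times (in seconds) for each turn. The `Turn` type and `transitions` function are hypothetical names; a real pipeline would also need to handle backchannels and turns that overlap several others at once.

```python
from dataclasses import dataclass


@dataclass
class Turn:
    speaker: str
    start: float  # seconds from the start of the recording
    end: float


def transitions(turns: list[Turn]) -> list[dict]:
    """Label each change of speaker as a gap (silence) or an overlap, with its length."""
    ordered = sorted(turns, key=lambda t: t.start)
    results = []
    for prev, nxt in zip(ordered, ordered[1:]):
        if prev.speaker == nxt.speaker:
            continue  # same speaker continuing: not a transition between speakers
        offset = round(nxt.start - prev.end, 2)  # negative means the next turn began early
        results.append({
            "from": prev.speaker,
            "to": nxt.speaker,
            "kind": "overlap" if offset < 0 else "gap",
            "seconds": abs(offset),
        })
    return results


turns = [
    Turn("interviewer", 0.0, 4.0),
    Turn("participant", 6.3, 12.0),   # 2.3 s silence before answering
    Turn("interviewer", 11.6, 14.0),  # starts 0.4 s before the participant finishes
]
for t in transitions(turns):
    print(t)
```

Whether an overlap like the 0.4-second one here is competitive or collaborative is a structural judgment this timing data alone cannot make.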

At the structural level, language models identify turn construction units, sequence organization, and repair mechanisms. The model recognizes when a speaker's turn is complete, when another speaker's entry is competitive versus collaborative, and when a repair sequence has been initiated. This is the bulk of the manual coding work that traditionally consumed weeks of analyst time.

At the thematic level, AI identifies topic trajectories across conversations -- where topics are introduced, how they are developed or abandoned, and which topics consistently generate interactional trouble. Cross-referencing topic patterns with structural patterns reveals which subjects make participants uncomfortable, which generate genuine engagement, and which prompt formulaic responses.
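To make the cross-referencing concrete, the sketch below assumes each turn has already been given a topic label and a set of structural codes, then computes what share of turns on each topic carry at least one trouble code. The labels in `TROUBLE_CODES` are hypothetical placeholders, not a standard coding scheme.

```python
from collections import Counter

# Hypothetical codes treated as signs of interactional trouble.
TROUBLE_CODES = {"long_gap", "repair", "hedge", "dispreferred"}


def trouble_rate_by_topic(coded_turns: list[tuple[str, set[str]]]) -> dict[str, float]:
    """Share of turns per topic that carry at least one trouble code."""
    totals, troubled = Counter(), Counter()
    for topic, codes in coded_turns:
        totals[topic] += 1
        if codes & TROUBLE_CODES:  # any overlap with the trouble set
            troubled[topic] += 1
    return {topic: troubled[topic] / totals[topic] for topic in totals}


coded_turns = [
    ("pricing", {"long_gap", "repair"}),
    ("pricing", {"hedge"}),
    ("pricing", set()),
    ("pricing", {"repair"}),
    ("onboarding", set()),
    ("onboarding", {"backchannel"}),  # backchannels are not counted as trouble
]
print(trouble_rate_by_topic(coded_turns))
```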

The key insight is that AI handles the annotation while the researcher handles the interpretation. The system identifies that a participant paused for 2.3 seconds before answering a question about pricing, used three repair sequences, and eventually provided a hedged response. The researcher interprets what that pattern means in context -- perhaps the participant is uncertain about the value proposition, or perhaps they are calculating whether the price fits their budget.

As noted in research on how AI is reshaping qualitative research analysis, the goal is not to replace human judgment but to remove the mechanical bottleneck that prevents human judgment from being applied at scale.

Practical Applications for Product Research

The acceleration that AI brings to conversational analysis opens up use cases that were previously impractical.

Usability testing at conversational depth. Instead of just capturing task completion rates and think-aloud commentary, teams can analyze the conversational dynamics of moderated usability sessions. How do users formulate their confusion? Where do they self-correct? When do they abandon a line of action versus persisting? These patterns, analyzed across dozens of sessions, reveal UX problems that task-based metrics miss entirely.

Customer interview programs with pattern tracking. Organizations running continuous discovery programs can now track conversational patterns over time. If customers discussing a particular feature start showing increasing hesitation and repair sequences month over month, that may be an early warning signal of growing dissatisfaction -- one that can surface before it shows up in NPS scores or churn data.
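One simple way to operationalize "increasing hesitation month over month" is sketched below, assuming structural coding has already counted, per month, how many turns about a feature there were and how many were coded as hesitant or repaired. The function names, the three-month rule, and the counts are all hypothetical; with small monthly samples, a rising rate can easily be noise.

```python
def hesitation_rates(counts: dict[str, tuple[int, int]]) -> list[tuple[str, float]]:
    """counts maps 'YYYY-MM' -> (turns about the feature, turns coded as hesitant or repaired)."""
    return [
        (month, hesitant / total)
        for month, (total, hesitant) in sorted(counts.items())
        if total > 0  # skip months with no relevant turns
    ]


def rising_for(rates: list[tuple[str, float]], months: int = 3) -> bool:
    """True if the rate went up in each of the last `months` month-to-month steps."""
    if len(rates) < months + 1:
        return False
    recent = [rate for _, rate in rates[-(months + 1):]]
    return all(later > earlier for earlier, later in zip(recent, recent[1:]))


# Hypothetical counts for one feature across four months of interviews.
counts = {"2024-01": (40, 6), "2024-02": (38, 8), "2024-03": (45, 12), "2024-04": (42, 15)}
rates = hesitation_rates(counts)
print(rates, rising_for(rates))
```

Using rates rather than raw counts keeps a month with more interviews from looking like a month with more trouble.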

Competitive research through language patterns. When users discuss competitor products versus yours, conversational analysis reveals emotional engagement that content analysis misses. Fluent, unhedged descriptions tend to indicate genuine enthusiasm. Halting, qualified descriptions suggest ambivalence. These linguistic patterns may predict switching behavior better than stated preferences do.
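A crude lexical proxy for "hedged versus unhedged" descriptions is hedge density: hedge expressions per 100 words. The sketch below assumes a small hypothetical hedge lexicon; it ignores prosody, pauses, and context, so it is a starting signal for comparison, not a measure of enthusiasm on its own.

```python
import re

# Hypothetical hedge lexicon; a real study would build and validate its own.
HEDGES = ["i guess", "sort of", "kind of", "maybe", "i think", "probably", "i suppose"]


def hedge_density(text: str) -> float:
    """Hedge expressions per 100 words (0.0 for empty text)."""
    lowered = text.lower()
    words = re.findall(r"[a-z']+", lowered)
    if not words:
        return 0.0
    hits = sum(
        len(re.findall(r"\b" + re.escape(hedge) + r"\b", lowered)) for hedge in HEDGES
    )
    return 100 * hits / len(words)


own = "It's sort of fine, I guess, maybe a bit slow."
competitor = "Their search is fast and the reports are great."
print(hedge_density(own), hedge_density(competitor))
```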

The approach aligns with the principles outlined in understanding what questions to ask in user interviews -- but goes further by analyzing not just the answers but the conversational structure around those answers.

Building a Conversational Analysis Workflow

For teams ready to integrate AI-powered conversational analysis into their research practice, the workflow looks different from traditional approaches.

Start with high-quality audio recording. Conversational analysis depends on acoustic features that compressed or noisy recordings degrade or destroy. Invest in proper recording equipment and ensure participants consent to detailed audio analysis, not just content transcription.

Use AI transcription with prosodic annotation as the foundation. The transcript should capture not just words but pauses, emphasis, and speaking rate. This annotated transcript becomes the dataset for pattern analysis.
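As a small example of what "capturing pauses" in a transcript can mean, the sketch below turns word-level timestamps into text with Jefferson-style pause marks: timed pauses in tenths of a second in parentheses, and "(.)" for a micropause. It assumes the transcription system returns `(word, start, end)` tuples in seconds; the 0.1 s and 0.2 s thresholds are assumptions, and overlap, emphasis, and intonation marks are not attempted.

```python
def render_with_pauses(
    words: list[tuple[str, float, float]], micro: float = 0.1, timed: float = 0.2
) -> str:
    """Insert Jefferson-style pause marks between (word, start_s, end_s) tuples.

    Gaps of at least `timed` seconds become a timed pause such as (1.2);
    gaps between `micro` and `timed` become a micropause (.); shorter gaps are ignored.
    """
    if not words:
        return ""
    parts = [words[0][0]]
    for (_, _, prev_end), (word, start, _) in zip(words, words[1:]):
        gap = start - prev_end
        if gap >= timed:
            parts.append(f"({gap:.1f})")
        elif gap >= micro:
            parts.append("(.)")
        parts.append(word)
    return " ".join(parts)


# Hypothetical word timings from an ASR system.
words = [("well", 0.0, 0.3), ("I", 1.5, 1.6), ("would", 1.75, 1.9), ("pay", 1.95, 2.2)]
print(render_with_pauses(words))  # well (1.2) I (.) would pay
```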

Run structural coding through AI to identify turn types, sequence organization, and repair mechanisms across all interviews simultaneously. This is the step that can compress weeks into hours. The output is a coded dataset that flags each detected instance of overlap, repair, topic shift, and preference structure across your entire corpus.
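A minimal sketch of what such a coded dataset might look like, assuming one record per detected instance. The `CodedInstance` fields and code labels are hypothetical; the point is that a flat, exportable table makes corpus-wide counts trivial and lets the researcher review every flagged excerpt.

```python
import csv
import io
from collections import Counter
from dataclasses import asdict, dataclass, fields


@dataclass
class CodedInstance:
    interview_id: str
    turn_index: int
    speaker: str
    code: str     # hypothetical labels, e.g. "overlap", "repair", "topic_shift", "dispreferred"
    excerpt: str


def code_counts(instances: list[CodedInstance]) -> Counter:
    """How often each structural code occurs across the whole corpus."""
    return Counter(inst.code for inst in instances)


def to_csv(instances: list[CodedInstance]) -> str:
    """Serialize coded instances so the researcher can review them in a spreadsheet."""
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=[f.name for f in fields(CodedInstance)])
    writer.writeheader()
    for inst in instances:
        writer.writerow(asdict(inst))
    return buffer.getvalue()


instances = [
    CodedInstance("p01", 14, "participant", "repair", "I would click on... well, actually"),
    CodedInstance("p01", 15, "interviewer", "overlap", "[right]"),
    CodedInstance("p02", 3, "participant", "repair", "no, I think I would"),
]
print(code_counts(instances))
print(to_csv(instances))
```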

Then apply human interpretation to the coded patterns. This is where the researcher's expertise becomes essential. The AI has identified that participants consistently use dispreferred response formats when discussing your onboarding process. The researcher determines why -- and what that means for product design.

The process mirrors how research agencies are using AI to deliver qualitative projects faster -- not by cutting corners on analysis, but by automating the mechanical work that previously made deep analysis unaffordable.

Cross-Language and Cross-Cultural Analysis

One of the most powerful applications of AI-powered conversational analysis is cross-cultural research. Conversational patterns vary dramatically across cultures -- turn-taking norms, silence tolerance, repair strategies, and preference structures all differ. Manual cross-cultural conversational analysis requires researchers fluent in both the language and the conversational norms of each culture studied.

AI models trained on multilingual conversational data can identify structural patterns across languages while flagging culturally specific features for human interpretation. A pause of three seconds might indicate discomfort in American English conversation but be a normal thinking pause in Finnish interaction. The AI identifies the structural pattern; the culturally informed researcher interprets its significance.
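One way to keep a fixed silence threshold from encoding a single culture's norms is to compare each pause with the speaker's own baseline. The sketch below is a swapped-in technique, not something the text above prescribes: it flags pauses longer than a multiple of the speaker's median pause, with a hypothetical factor of 2. The pause values are illustrative, and normalization only narrows the candidates; interpreting them still needs a culturally informed researcher.

```python
from statistics import median


def flag_long_pauses(pauses: list[float], factor: float = 2.0) -> list[int]:
    """Indices of pauses longer than `factor` times this speaker's own median pause."""
    if not pauses:
        return []
    baseline = median(pauses)
    return [i for i, pause in enumerate(pauses) if pause > factor * baseline]


# Hypothetical pause lengths (seconds) for two participants.
slow_baseline = [1.8, 2.5, 3.0, 2.2, 6.5]  # a 3.0 s pause is ordinary for this speaker
fast_baseline = [0.3, 0.5, 0.4, 3.0, 0.6]  # the same 3.0 s pause stands out here
print(flag_long_pauses(slow_baseline), flag_long_pauses(fast_baseline))
```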

This capability is particularly valuable for global product teams conducting research across markets. Understanding how users in different cultures talk about your product -- not just what they say, but how they say it -- provides competitive intelligence that no localization team can offer through translation alone.

The Interpretation Layer Remains Human

It is worth emphasizing what AI does not replace in conversational analysis. The interpretive framework -- whether conversation analysis, discourse analysis, or interactional sociolinguistics -- remains a human domain. AI identifies that a participant used a particular conversational structure. The analyst determines what that structure accomplishes in context.

This division of labor is actually more rigorous than traditional manual coding in one important way: it can reduce one form of coder bias. When a human coder manually identifies and codes conversational features, their theoretical orientation influences what they notice. AI coding applies the same criteria to every turn, flagging instances of a structural feature whether or not the researcher was predisposed to find them. The model has its own errors and blind spots, however, so its coding still needs to be checked against a human-coded sample.
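A common way to check AI coding against human coding is to have a researcher independently code a sample of turns and measure chance-corrected agreement with Cohen's kappa. This is a standard reliability statistic, offered here as a suggested check rather than part of the workflow described above; the labels in the example are hypothetical.

```python
from collections import Counter


def cohens_kappa(a: list[str], b: list[str]) -> float:
    """Chance-corrected agreement between two coders' labels for the same items."""
    if len(a) != len(b) or not a:
        raise ValueError("need two equal-length, non-empty label lists")
    n = len(a)
    observed = sum(x == y for x, y in zip(a, b)) / n
    counts_a, counts_b = Counter(a), Counter(b)
    # Agreement expected if both coders labeled at random with their own label frequencies.
    expected = sum(counts_a[k] * counts_b[k] for k in counts_a.keys() | counts_b.keys()) / (n * n)
    if expected == 1.0:
        return 1.0  # both coders used one identical label throughout: kappa is undefined
    return (observed - expected) / (1 - expected)


human = ["repair", "repair", "none", "none", "repair", "none", "none", "none"]
ai    = ["repair", "none", "none", "none", "repair", "none", "repair", "none"]
print(round(cohens_kappa(human, ai), 3))
```

The turns where the two coders disagree are usually the most informative ones to review, whichever coder turns out to be right.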

The result is conversational analysis that is both faster and more comprehensive. Researchers spend their time where human judgment matters -- in interpretation and theory-building -- rather than in the mechanical work of identifying and counting conversational features.

For research teams considering this approach, the combination with building a research repository that teams actually use creates a powerful feedback loop. Conversational patterns stored in a searchable repository become reusable assets -- future researchers can query for specific interactional patterns across the entire history of organizational research.
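A minimal sketch of such a repository query, using Python's built-in `sqlite3` module with an in-memory database. The table layout, study names, and rows are hypothetical; the idea is simply that once coded instances are stored with study, topic, and code, "how often did this pattern appear on this topic, per study" becomes a one-line query.

```python
import sqlite3

# Hypothetical rows: (study, interview_id, topic, code, excerpt).
SAMPLE_ROWS = [
    ("2023-onboarding", "p01", "onboarding", "dispreferred", "Well, it was okay, I guess"),
    ("2023-onboarding", "p02", "onboarding", "dispreferred", "Um, yeah, mostly"),
    ("2023-onboarding", "p02", "pricing", "repair", "It costs... no, we pay"),
    ("2024-onboarding", "p07", "onboarding", "dispreferred", "I mean, it works"),
    ("2024-onboarding", "p08", "onboarding", "repair", "I clicked... actually I"),
]


def build_repository(rows, path: str = ":memory:") -> sqlite3.Connection:
    """Create (if needed) and populate a table of coded instances."""
    conn = sqlite3.connect(path)
    conn.execute(
        "CREATE TABLE IF NOT EXISTS coded_instances "
        "(study TEXT, interview_id TEXT, topic TEXT, code TEXT, excerpt TEXT)"
    )
    conn.executemany("INSERT INTO coded_instances VALUES (?, ?, ?, ?, ?)", rows)
    conn.commit()
    return conn


def find_pattern(conn: sqlite3.Connection, code: str, topic: str) -> list[tuple[str, int]]:
    """Count instances of one interactional pattern on one topic, per study."""
    cursor = conn.execute(
        "SELECT study, COUNT(*) FROM coded_instances "
        "WHERE code = ? AND topic = ? GROUP BY study ORDER BY study",
        (code, topic),
    )
    return cursor.fetchall()


conn = build_repository(SAMPLE_ROWS)
print(find_pattern(conn, "dispreferred", "onboarding"))
```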

Moving Forward

Conversational analysis has been underused in applied research not because it lacks value, but because its cost was prohibitive. AI pattern recognition removes the cost barrier without removing the analytical depth.

The teams that adopt this approach first are likely to gain a significant advantage. While competitors rely on surface-level content analysis of their user interviews, these teams will understand the conversational dynamics that reveal genuine user sentiment, hidden usability problems, and unspoken needs.

The richest qualitative data has always been in how people talk, not just what they say. AI finally makes it practical to listen at that level of detail.