The Invisible Weight of First Exposure

Every research session begins with a first impression. The first screen a participant sees, the first question you ask, the first prototype you present -- each creates an anchor that silently shapes every subsequent response. This is not a subtle effect. In controlled studies, anchoring bias shifts participant evaluations by 20-40% depending on the domain and the strength of the initial anchor.

The anchoring effect, first documented by Tversky and Kahneman in 1974, describes our tendency to rely disproportionately on the first piece of information encountered when making judgments. In user research, this means your study design is not merely collecting data -- it is actively manufacturing it. The order in which you present stimuli, the framing of your first question, even the visual design of your consent form create anchors that contaminate downstream findings.

This is not a problem you can solve by "being aware of it." Awareness does not eliminate cognitive bias. Only structural methodological choices -- counterbalancing, randomization, careful sequencing -- can mitigate the damage. And most research teams skip these steps because they do not understand the magnitude of what they are losing.

How Anchoring Operates in Research Contexts

Anchoring in user research manifests through several mechanisms, each compounding the others:

Numeric anchoring in rating scales. When participants see a product rated 4.5 stars before evaluating it themselves, their own ratings cluster around that number. This applies to any numeric context: if your screener asks "How many hours per week do you use this product (most users report 5-10 hours)?" you have anchored every response to that range. A participant who actually uses it 2 hours a week may report 4; one who uses it 15 hours may report 12. The anchor pulls responses toward itself like gravity.
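One way to put a number on that pull is to measure how much of the gap between a participant's actual value and the anchor their report closes. This is a minimal sketch that depends on two assumptions. First, you have an independent measure of actual usage, such as product analytics. Second, the midpoint of the "5-10 hours" range serves as the anchor. The function name `anchor_pull` is hypothetical.

```python
def anchor_pull(actual: float, reported: float, anchor: float) -> float:
    """Fraction of the distance from `actual` to `anchor` covered by `reported`.

    0.0 means the report matches actual behavior, 1.0 means it landed on the
    anchor, and negative values mean the report moved away from the anchor.
    """
    gap = anchor - actual
    if gap == 0:
        raise ValueError("actual value equals the anchor; pull is undefined")
    return (reported - actual) / gap


# Hypothetical anchor: midpoint of the "5-10 hours" range in the screener.
ANCHOR = 7.5

# The two participants from the example: (actual hours, reported hours).
light_user = anchor_pull(actual=2, reported=4, anchor=ANCHOR)
heavy_user = anchor_pull(actual=15, reported=12, anchor=ANCHOR)
print(f"light user: {light_user:.2f}, heavy user: {heavy_user:.2f}")
```

Both participants move more than a third of the way toward the anchor (0.36 and 0.40), even though they sit on opposite sides of it.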

Conceptual anchoring through framing. The way you describe a product before showing it creates expectations that function as anchors. Telling participants "this is our new premium experience" versus "this is an early prototype" changes their evaluative framework entirely. The first frame anchors them to judge against premium standards; the second anchors them to forgive flaws.

Sequential anchoring in comparative studies. When participants evaluate multiple designs, the first design becomes the reference point. Design B is not evaluated on its own merits -- it is evaluated as "better or worse than Design A." This is why A/B preference tests where A always comes first produce systematically biased results. The question order effects documented in survey methodology are closely related: an earlier question becomes the reference point against which later ones are interpreted and answered.

Anchoring through task context. Even the scenario you set before a task creates anchors. "Imagine you are buying a gift for a friend" versus "Imagine you are buying something for yourself" anchors participants to different price sensitivity thresholds, different feature priorities, and different success criteria.

The Compounding Problem: Anchors Stack

What makes anchoring particularly dangerous in research is that anchors do not exist in isolation. They compound. Your participant is simultaneously anchored by:

- The recruitment screener questions (which primed certain mental models)

- The consent form language (which set expectations about the study)

- The moderator's introduction (which framed the relationship)

- The first task or question (which established the evaluative baseline)

- Each subsequent exposure (which was judged relative to all prior anchors)

By the time you reach your critical research questions -- the ones that actually inform product decisions -- your participant is operating within a web of anchors that may have little to do with their authentic experience of your product. You are not measuring their opinion. You are measuring the opinion that your study design constructed for them.

This is why replication of qualitative findings across studies with different designs often produces contradictory results. Teams blame "different user segments" or "market changes," but frequently the real explanation is different anchoring structures producing different manufactured opinions.

Structural Mitigation: What Actually Works

Mitigating anchoring requires structural changes to study design, not just moderator awareness. These approaches have empirical support:

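Latin square counterbalancing. For comparative evaluations, use a Latin square design, in which each condition appears in each position equally often across participants. With three designs, a basic Latin square needs three orders (A-B-C, B-C-A, C-A-B). If you also want to balance which design immediately follows which (a Williams design, which for an odd number of conditions needs twice as many orders), you need six orders, and for three designs that is every possible permutation. Either way, you need participants in multiples of the number of orders before each design has appeared equally often in each position. Most teams test five participants total and declare a winner -- this is not research, it is anchoring bias with extra steps.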
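You can generate and check this balancing logic mechanically. The Python sketch below builds a cyclic Latin square and a Williams design, which is a standard Latin-square construction that also balances first-order carryover. It then cycles participants through the resulting orders. The function names are hypothetical. The warning in `assign_orders` flags sample sizes that leave the last cycle incomplete.

```python
from collections import Counter


def latin_square(n: int) -> list[list[int]]:
    """Cyclic Latin square: row r is the list of conditions shifted by r."""
    return [[(r + c) % n for c in range(n)] for r in range(n)]


def williams_design(n: int) -> list[list[int]]:
    """Latin square that also balances which condition immediately follows which.

    The first row interleaves low and high indices (0, 1, n-1, 2, n-2, ...);
    the other rows are cyclic shifts of it. For odd n, the mirrored rows are
    appended, giving 2n orders in total.
    """
    first, low, high = [0], 1, n - 1
    take_low = True
    while len(first) < n:
        if take_low:
            first.append(low)
            low += 1
        else:
            first.append(high)
            high -= 1
        take_low = not take_low
    rows = [[(c + shift) % n for c in first] for shift in range(n)]
    if n % 2 == 1:
        rows += [row[::-1] for row in rows]
    return rows


def position_counts(orders: list[list[int]]) -> Counter:
    """How often each (position, condition) pair occurs across all orders."""
    return Counter((pos, cond) for order in orders for pos, cond in enumerate(order))


def assign_orders(participants: list[str], orders: list) -> dict[str, list]:
    """Cycle participants through the orders; balance holds only for full cycles."""
    if len(participants) % len(orders):
        print(f"warning: {len(participants)} participants leave the last "
              f"cycle of {len(orders)} orders incomplete")
    return {p: orders[i % len(orders)] for i, p in enumerate(participants)}


designs = ["A", "B", "C"]
orders = [[designs[i] for i in row] for row in williams_design(len(designs))]
for order in orders:
    print("-".join(order))

assignment = assign_orders([f"P{i}" for i in range(1, 6)], orders)
```

For three designs, the Williams design yields all six orderings. A five-participant study triggers the warning because it cannot complete a single cycle.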

Anchoring-aware question sequencing. Move from general to specific, from open to closed, from unaided to aided. Never begin with a specific stimulus and then ask for general opinions -- you have already contaminated the general with the specific. Just as eval-driven approaches to testing require careful sequencing of test cases to avoid interference, research requires careful sequencing of stimuli.
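You can turn the general-to-specific rule into a check that runs on a discussion guide before fielding. This minimal sketch assumes each question is hand-tagged on three yes/no dimensions, and the tagging scheme is hypothetical. The rule it enforces is that once a guide has become specific, closed or aided on a dimension, it should not step back on that dimension.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Question:
    text: str
    specific: bool  # refers to a particular design, feature, or stimulus
    closed: bool    # fixed response options (rating scale, yes/no, multiple choice)
    aided: bool     # shows or names the stimulus rather than relying on recall


DIMENSIONS = ("specific", "closed", "aided")


def sequencing_violations(questions: list[Question]) -> list[tuple[int, str, int]]:
    """Flag questions that step back to general, open, or unaided.

    Returns (question_index, dimension, index where that dimension first became True).
    """
    first_true: dict[str, int] = {}
    violations = []
    for i, q in enumerate(questions):
        for dim in DIMENSIONS:
            if getattr(q, dim):
                first_true.setdefault(dim, i)
            elif dim in first_true:
                violations.append((i, dim, first_true[dim]))
    return violations


guide = [
    Question("How do you usually plan a trip?", specific=False, closed=False, aided=False),
    Question("What do you think of this booking page?", specific=True, closed=False, aided=True),
    Question("What matters most when you book travel?", specific=False, closed=False, aided=False),
    Question("Rate this page from 1 to 5.", specific=True, closed=True, aided=True),
]

for index, dim, since in sequencing_violations(guide):
    print(f"Q{index + 1} is not {dim}, but Q{since + 1} already was")
```

The third question is flagged because it asks for general priorities after the participant has already seen the booking page.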

Neutral baseline establishment. Before showing any design or prototype, establish participants' current mental models through unaided recall. What do they think of when they think of this product category? What are their unprompted expectations? Document this before introducing any anchors. This gives you a pre-anchor baseline against which you can measure the anchoring effect itself.
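This is a minimal sketch of comparing the unaided baseline with what a participant says after exposure. It assumes responses have already been coded into sets of attribute labels, and the coding scheme and labels here are hypothetical. Some attributes appear only after the stimulus puts them in front of the participant, and their share is a rough, upper-bound signal of the stimulus's influence. Exposure can also legitimately remind people of things they care about.

```python
def stimulus_introduced(baseline: set[str], post_exposure: set[str],
                        stimulus: set[str]) -> set[str]:
    """Attributes mentioned after exposure that the stimulus supplied and the
    participant did not raise during unaided recall."""
    return (post_exposure & stimulus) - baseline


def introduced_share(baseline: set[str], post_exposure: set[str],
                     stimulus: set[str]) -> float:
    """Share of post-exposure mentions that trace back to the stimulus."""
    if not post_exposure:
        return 0.0
    return len(stimulus_introduced(baseline, post_exposure, stimulus)) / len(post_exposure)


baseline = {"price", "speed", "reliability"}           # unaided recall, before any design
stimulus = {"ai assistant", "dark mode", "speed"}      # what the prototype foregrounds
post = {"speed", "ai assistant", "dark mode", "price"}  # mentions after exposure

print(sorted(stimulus_introduced(baseline, post, stimulus)))
print(introduced_share(baseline, post, stimulus))
```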

Randomized stimulus presentation. When using research platforms that support it, randomize the order of stimuli presentation for each participant. This does not eliminate anchoring -- each individual is still anchored by whatever they see first -- but across your sample, the anchoring effects average out rather than systematically biasing toward one condition.
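If your platform does not randomize for you, a per-participant shuffle seeded by participant ID keeps each order reproducible, so you can log the order and re-derive it during analysis. This is a minimal sketch using Python's standard `random` module. The seed format and study label are hypothetical.

```python
import random
from collections import Counter


def presentation_order(stimuli: list[str], participant_id: str, study_seed: str) -> list[str]:
    """Shuffle stimuli per participant, reproducibly.

    Seeding with the study and participant IDs means the same participant always
    gets the same order.
    """
    rng = random.Random(f"{study_seed}:{participant_id}")
    return rng.sample(stimuli, k=len(stimuli))


stimuli = ["Design A", "Design B", "Design C"]
orders = {f"P{i:02d}": presentation_order(stimuli, f"P{i:02d}", "study-2024-q3")
          for i in range(1, 31)}

# Tally which design each participant saw first; each should lead roughly a third of the time.
first_counts = Counter(order[0] for order in orders.values())
print(first_counts)
```

Pure randomization balances positions only on average. With small samples, the first-position counts can still be lopsided, which is one reason to prefer a counterbalanced design when the sample is small.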

Delayed evaluation protocols. Instead of asking for reactions immediately after exposure (when the anchor is strongest), introduce a buffer task between exposure and evaluation. Even a 2-minute distractor task reduces anchoring effects by 15-25%. The anchor decays over time; use that decay strategically.
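This minimal sketch builds a session schedule that puts a distractor task between each exposure and its evaluation. The 2-minute distractor follows the text. The distractor task and the other durations are hypothetical placeholders.

```python
from dataclasses import dataclass


@dataclass
class Step:
    kind: str       # "expose", "distract", or "evaluate"
    item: str       # stimulus name, or the distractor task name
    minutes: float


def delayed_evaluation_schedule(stimuli: list[str], expose_min: float = 3.0,
                                distractor: str = "mental arithmetic",
                                distract_min: float = 2.0,
                                evaluate_min: float = 2.0) -> list[Step]:
    """Place a distractor task between each exposure and its evaluation."""
    steps = []
    for stimulus in stimuli:
        steps.append(Step("expose", stimulus, expose_min))
        steps.append(Step("distract", distractor, distract_min))
        steps.append(Step("evaluate", stimulus, evaluate_min))
    return steps


schedule = delayed_evaluation_schedule(["Design A", "Design B"])
for step in schedule:
    print(f"{step.kind:<9} {step.item:<18} {step.minutes:g} min")
print(f"total: {sum(s.minutes for s in schedule):g} min")
```

Building the buffer into the schedule makes the added session time visible up front. In this example, the distractors account for 4 of the 14 minutes.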

The Moderator as Anchor

Researchers themselves are anchoring agents. Your verbal and non-verbal reactions to participant responses create anchors for subsequent responses. A slight nod when a participant mentions a feature tells them that feature is "correct" to discuss, anchoring all subsequent conversation toward it.

This extends to probe questions. "That is interesting -- tell me more about the navigation" anchors the participant to believe navigation is what matters in this study. Contrast this with "Tell me more about that" -- which maintains neutrality but sacrifices specificity.

The solution is not robotic neutrality (which itself creates an anchor of formality that suppresses natural responses). The solution is systematic probe protocols that vary the topics you explore across participants, ensuring that no single topic receives consistent moderator-anchored emphasis. The context engineering principles used in AI system design apply here: the context you construct determines the output you receive.
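This minimal sketch of a rotated probe plan assumes the moderator works from a per-participant list of topics to probe in order. The topic names and the function are hypothetical. Rotation makes each topic lead equally often when the participant count is a multiple of the topic count. It does not remove tone of voice or other non-verbal cues.

```python
from collections import Counter


def probe_plan(topics: list[str], n_participants: int) -> list[list[str]]:
    """Rotate the order of probe topics across participants.

    Participant k starts at topic k mod len(topics) and wraps around, so no
    single topic consistently gets the first probe across the study.
    """
    n = len(topics)
    return [topics[k % n:] + topics[:k % n] for k in range(n_participants)]


topics = ["navigation", "search", "checkout", "account settings"]
plan = probe_plan(topics, n_participants=8)

# With 8 participants and 4 topics, each topic should lead exactly twice.
print(Counter(p[0] for p in plan))
```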

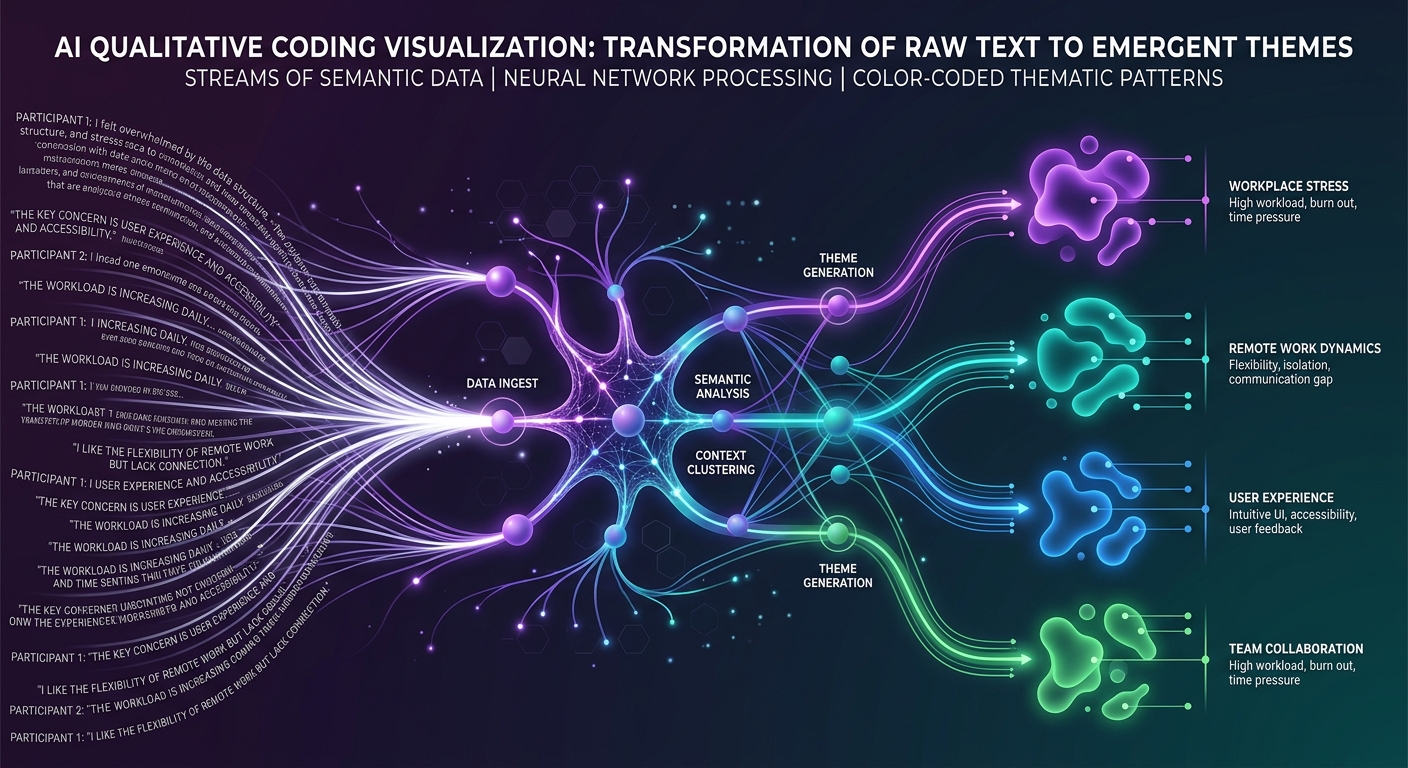

Detecting Anchoring in Your Existing Data

You can audit past studies for anchoring contamination by examining:

Response clustering around first exposure. If ratings or preferences cluster suspiciously around whatever was shown first, anchoring is likely operating. Plot responses by presentation order -- if first-position items consistently receive different ratings than later-position items, your data is anchored.
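This is a minimal sketch of the presentation-order audit. It assumes responses are stored as (participant, item, position, rating) tuples, and the data below is invented for illustration. The sketch reports the mean rating at each position and, for each item, how much higher that item scores when shown first. In a counterbalanced design, a consistent gap across items points to a position effect rather than a genuine preference.

```python
from collections import defaultdict
from statistics import mean

# Invented example data: (participant, item, position shown, rating 1-5).
# Orders follow a 3x3 Latin square, so each item appears once in each position.
responses = [
    ("P1", "A", 1, 5), ("P1", "B", 2, 4), ("P1", "C", 3, 3),
    ("P2", "B", 1, 5), ("P2", "C", 2, 3), ("P2", "A", 3, 4),
    ("P3", "C", 1, 4), ("P3", "A", 2, 4), ("P3", "B", 3, 3),
]


def mean_by_position(records):
    """Mean rating at each presentation position, pooled across items."""
    by_position = defaultdict(list)
    for _participant, _item, position, rating in records:
        by_position[position].append(rating)
    return {pos: mean(vals) for pos, vals in sorted(by_position.items())}


def first_vs_later(records):
    """Per item: mean rating when shown first minus mean rating when shown later."""
    first, later = defaultdict(list), defaultdict(list)
    for _participant, item, position, rating in records:
        (first if position == 1 else later)[item].append(rating)
    return {item: mean(first[item]) - mean(later[item])
            for item in sorted(first) if item in later}


for pos, avg in mean_by_position(responses).items():
    print(f"position {pos}: mean rating {avg:.2f}")
print(first_vs_later(responses))
```

In this invented data, every item scores at least a point higher when shown first, which is the pattern the audit is looking for.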

Diminishing differentiation. When participants see many options in sequence, later options often receive less differentiated responses ("it is about the same as the last one"). This is anchoring combined with fatigue -- participants are using earlier exposures as reference points rather than evaluating independently.
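One rough way to see diminishing differentiation is to track how much each rating differs from the one before it, by position in the sequence. This minimal sketch assumes you have each participant's ratings in the order the items were shown. The data is invented. Shrinking step sizes in a counterbalanced study suggest relative rather than independent judgment. The same pattern can also come from items that are genuinely similar, so read it alongside the design.

```python
from collections import defaultdict
from statistics import mean


def step_sizes_by_position(sequences: dict[str, list[int]]) -> dict[int, float]:
    """Mean absolute change between consecutive ratings, keyed by position.

    Position k (1-based, k >= 2) compares the k-th rating with the one before it.
    """
    steps = defaultdict(list)
    for ratings in sequences.values():
        for k in range(1, len(ratings)):
            steps[k + 1].append(abs(ratings[k] - ratings[k - 1]))
    return {pos: mean(vals) for pos, vals in sorted(steps.items())}


# Invented ratings, in presentation order, for five items.
sequences = {
    "P1": [2, 5, 4, 4, 4],
    "P2": [5, 3, 4, 4, 3],
    "P3": [4, 1, 3, 3, 3],
}
for pos, size in step_sizes_by_position(sequences).items():
    print(f"position {pos}: mean change from previous rating {size:.2f}")
```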

Language mirroring. If participants use language from your task descriptions, screener questions, or early prompts in their later responses, they are anchored to your framing rather than generating independent descriptions of their experience.
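This is a rough screen for mirroring, assuming transcripts and study materials are available as plain text. It computes the share of two-word phrases in a response that also appear in your task descriptions, screener or prompts. Bigram overlap is a crude, hypothetical proxy, and common phrases will overlap by chance. Compare the score across participants or studies rather than reading a single number in isolation.

```python
import re


def ngrams(text: str, n: int = 2) -> set[tuple[str, ...]]:
    """Lowercased word n-grams of a text."""
    words = re.findall(r"[a-z']+", text.lower())
    return {tuple(words[i:i + n]) for i in range(len(words) - n + 1)}


def mirrored_share(response: str, materials: list[str], n: int = 2) -> float:
    """Share of the response's word n-grams that also appear in the study materials."""
    resp = ngrams(response, n)
    if not resp:
        return 0.0
    source = set().union(*(ngrams(m, n) for m in materials))
    return len(resp & source) / len(resp)


materials = [
    "Imagine you want a seamless checkout experience.",
    "How would you rate the seamless checkout?",
]
response = "The seamless checkout experience felt quick."

share = mirrored_share(response, materials)
print(f"{share:.0%} of the response's word pairs appear in study materials")
```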

Premature convergence. If all participants in a study converge on similar opinions despite diverse backgrounds, check whether your study design anchored them toward convergence through shared early exposures. Genuine diversity of opinion is the norm; unexpected consensus across very different participants is often a sign of shared anchoring.
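One way to check for premature convergence is to measure how spread out answers are within each background segment. This minimal sketch assumes a single categorical question and segment labels of your choosing, and the data is invented. Normalized Shannon entropy near 0 in segments you expected to disagree is a prompt to review what those participants saw first. What counts as "too low" is a judgment call, not a fixed threshold.

```python
from collections import Counter
from math import log


def normalized_entropy(answers: list[str], n_options: int) -> float:
    """Shannon entropy of the answers, scaled to the range 0..1.

    0 means every participant gave the same answer; 1 means answers were
    spread evenly across all `n_options` choices.
    """
    if n_options < 2:
        raise ValueError("need at least two answer options")
    total = len(answers)
    h = sum((c / total) * log(total / c) for c in Counter(answers).values())
    return h / log(n_options)


def entropy_by_segment(rows: list[tuple[str, str]], n_options: int) -> dict[str, float]:
    """rows are (segment, answer) pairs; returns normalized entropy per segment."""
    by_segment: dict[str, list[str]] = {}
    for segment, answer in rows:
        by_segment.setdefault(segment, []).append(answer)
    return {seg: normalized_entropy(ans, n_options) for seg, ans in by_segment.items()}


# Invented data: "Which design would you choose?" with options A, B, C.
rows = ([("new users", "A")] * 5
        + [("power users", "A")] * 4 + [("power users", "B")]
        + [("admins", "A")] * 5)
scores = entropy_by_segment(rows, n_options=3)
for segment, score in scores.items():
    print(f"{segment}: {score:.2f}")
```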

Building Anchoring-Resistant Research Programs

The goal is not to eliminate anchoring -- that is impossible for human cognition. The goal is to build guardrails that prevent systematic errors from compounding across studies and decisions. A mature research program treats anchoring the way a mature engineering program treats technical debt: as an inevitable force that must be actively managed rather than ignored.

Practical steps for your research program:

Audit study designs before fielding. For every study, identify the first three things a participant will encounter and ask: how might these anchor subsequent responses? Document your anchoring risks alongside your research questions.

Vary your designs across waves. If you run the same study quarterly, change the presentation order each wave. If results shift with order changes, you know prior findings were partially anchored rather than purely reflective of user opinion.
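This minimal sketch of the wave-to-wave check assumes each wave stores per-item ratings and that presentation order was the main thing changed between waves. The ratings and orders are invented. If the leading item changes when the order changes, treat the earlier ranking as order-sensitive. With small samples, the shift could also be noise, so the comparison tells you where to look rather than proving anchoring.

```python
from statistics import mean


def ranking(ratings: dict[str, list[float]]) -> list[str]:
    """Items ordered from highest to lowest mean rating."""
    return sorted(ratings, key=lambda item: mean(ratings[item]), reverse=True)


def rank_shifts(wave_a: dict[str, list[float]],
                wave_b: dict[str, list[float]]) -> dict[str, int]:
    """Per item: rank in wave B minus rank in wave A (positive means it dropped)."""
    rank_a = {item: i for i, item in enumerate(ranking(wave_a))}
    rank_b = {item: i for i, item in enumerate(ranking(wave_b))}
    return {item: rank_b[item] - rank_a[item] for item in rank_a if item in rank_b}


q1 = {"A": [4, 5, 4], "B": [3, 4, 3], "C": [3, 3, 2]}  # wave 1, shown in order A-B-C
q2 = {"A": [3, 3, 4], "B": [4, 4, 3], "C": [3, 2, 3]}  # wave 2, shown in order B-C-A

if ranking(q1)[0] != ranking(q2)[0]:
    print("leading item changed with presentation order:", ranking(q1)[0], "->", ranking(q2)[0])
print(rank_shifts(q1, q2))
```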

Report anchoring limitations. Every research report should include a section on potential anchoring effects from the study design. This is not weakness -- it is methodological integrity that builds long-term trust in research findings.

Cross-validate with unanchored methods. Complement structured research (which always contains anchors) with unstructured observation, behavioral analytics, and unmoderated studies where participants encounter stimuli in their natural context rather than your manufactured one.

The Stakes of Ignoring Anchoring

Teams that do not account for anchoring bias make product decisions based on manufactured preferences rather than authentic user needs. They optimize for the opinion their study design created, not the opinion that exists in the wild. The result is products that test well in research and underperform in market -- a pattern so common that many organizations have lost faith in user research entirely.

The problem was never research itself. The problem was research that did not account for its own influence on the data it collected. Anchoring is not a minor methodological footnote. It is a fundamental force that shapes every finding you will ever produce. Treat it accordingly.